Gartner estimates that poor data quality costs organizations an average of $12.9 million per year. IBM pegged the total cost to the US economy at $3.1 trillion annually. These numbers are so large they feel abstract. But bad data costs are real, and they show up in your organization every day in ways that are measurable if you know where to look. This article breaks down the specific cost categories, shows you how to estimate the impact in your own organization, and prioritizes the fixes that deliver the most value.

Where Bad Data Costs Hide

Bad data costs are sneaky because they rarely show up as a line item in the budget. They are embedded in other costs: wasted labor, missed revenue, failed projects, and compliance penalties. Here are the seven categories where most organizations feel the pain.

1. Manual Rework and Data Cleaning

This is the most visible cost. People spend time fixing data instead of analyzing it. A data analyst who spends 40% of their time cleaning and reconciling data before they can do actual analysis is delivering 60% of their potential value. At a $120,000 salary, that is $48,000 per year spent on cleaning instead of insight.

Common rework activities:

- Deduplicating customer records across systems

- Correcting data entry errors (misspelled names, wrong addresses, incorrect codes)

- Reconciling conflicting values between systems (the CRM says one thing, billing says another)

- Manually filling in missing fields from other sources

- Reformatting data that was entered inconsistently

To estimate this cost, survey your team: "What percentage of your time do you spend cleaning, fixing, or reconciling data?" Multiply each person's answer by their fully loaded compensation and add up the results. The total is usually startling.
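The survey arithmetic is simple enough to script. This is a minimal sketch, not a prescribed tool. `SurveyResponse` and `annual_cleanup_cost` are hypothetical names. The first respondent reproduces the $120,000 / 40% example above, and the second respondent's figures are made up.

```python
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    """One survey answer (hypothetical structure for illustration)."""
    name: str
    cleanup_share: float        # share of time spent fixing data, 0.0 to 1.0
    loaded_compensation: float  # fully loaded annual cost of this person


def annual_cleanup_cost(responses: list[SurveyResponse]) -> float:
    """Sum each person's cleanup share multiplied by their loaded compensation."""
    total = 0.0
    for r in responses:
        if not 0.0 <= r.cleanup_share <= 1.0:
            raise ValueError(f"{r.name}: cleanup_share must be between 0 and 1")
        total += r.cleanup_share * r.loaded_compensation
    return total


team = [
    SurveyResponse("analyst_a", 0.40, 120_000),  # the example from the text
    SurveyResponse("analyst_b", 0.25, 110_000),  # made-up second respondent
]
print(f"${annual_cleanup_cost(team):,.0f} per year")  # prints "$75,500 per year"
```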

2. Failed or Delayed Projects

Data quality problems are a leading cause of analytics project failure. A team spends weeks building a predictive model, only to discover that the input data has systematic errors that make the model useless. A dashboard project gets delayed by two months because the underlying data sources do not agree on basic facts.

The cost here is not just the wasted project hours. It is the opportunity cost: the insights that would have been generated, the decisions that would have been informed, and the revenue that would have resulted from faster action.

3. Bad Decisions

This is the largest cost category and the hardest to measure. When decisions are based on inaccurate data, the outcomes are suboptimal. Some examples:

- Marketing misallocation: Attribution data incorrectly credits Channel A for conversions that actually came from Channel B. Marketing doubles down on Channel A and reduces spend on Channel B. Revenue declines, and nobody understands why, because the data says Channel A should be working.

- Inventory mistakes: Demand forecasts built on dirty sales data overestimate demand for some products and underestimate it for others. Result: overstocked warehouses on low-demand items and stockouts on high-demand items. Both cost money.

- Pricing errors: Cost data used for pricing decisions includes incorrect allocations. Some products are priced below actual cost, losing money on every sale. Other products are overpriced, losing volume to competitors.

- Customer churn: Customer health scores built on incomplete usage data miss warning signs. At-risk customers are not flagged for intervention. They churn, and the post-mortem reveals that the data to predict the churn existed but was not captured correctly.

4. Customer Experience Degradation

Bad data directly affects customer experience:

- Wrong name on a personalized email (or worse, a competitor's name)

- Duplicate mailings (wasteful and annoying)

- Incorrect billing amounts (creates support tickets and erodes trust)

- Shipping to old addresses (returned shipments and delays)

- Irrelevant product recommendations (signals that you do not understand the customer)

Each of these seems minor individually. In aggregate, they increase churn, reduce customer lifetime value, and generate support costs. A study by Experian found that 83% of companies believe inaccurate data affects their customer experience, and 66% believe it directly impacts their bottom line.

5. Compliance and Legal Risk

Regulatory frameworks like GDPR, CCPA, and HIPAA impose requirements on data accuracy, not just data security. Under GDPR (Article 16, the right to rectification), individuals have the right to have inaccurate personal data corrected. If your records are systematically inaccurate and you cannot demonstrate processes to maintain accuracy, that is a compliance gap.

Beyond regulatory fines, data quality issues create legal exposure. Inaccurate financial reporting can trigger SEC investigations. Incorrect medical records create malpractice risk. Flawed employment data creates discrimination liability.

6. Integration Failures

Modern organizations rely on data flowing between systems. When data quality is poor in the source system, every downstream system inherits the problems. A misspelled customer name in the CRM becomes a mismatched record in the billing system, which becomes a failed reconciliation in the accounting system, which becomes a manual fix by the finance team every month.

Integration failures compound: each system adds its own data quality issues on top of the inherited ones. By the time data reaches the analytics layer, it may have accumulated errors from three or four systems.
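A rough way to see the compounding: if each system independently corrupts some fraction of the records passing through it, the share of records that arrive clean is the product of each system's clean rate. Independence is a simplifying assumption (real errors are often correlated), and the 2% rate per system is hypothetical.

```python
from math import prod


def clean_fraction(error_rates: list[float]) -> float:
    """Share of records still error-free after every system, assuming each
    system introduces errors independently at its own rate."""
    return prod(1.0 - rate for rate in error_rates)


# Hypothetical pipeline: CRM -> billing -> accounting -> analytics,
# each introducing errors into 2% of the records it touches.
rates = [0.02, 0.02, 0.02, 0.02]
print(f"{1 - clean_fraction(rates):.1%} of records carry at least one error")
# prints "7.8% of records carry at least one error"
```

Four systems at a modest 2% each already leave roughly one record in thirteen with at least one error.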

7. Wasted Technology Investment

Organizations invest in BI platforms, machine learning models, and advanced analytics expecting to extract value from their data. When the underlying data is poor, these investments underperform. When a $200,000-per-year BI platform delivers unreliable dashboards, that is not a BI problem. It is a data quality problem with an expensive symptom.

Measuring Data Quality Costs in Your Organization

Here is a practical approach to estimating your organization's data quality costs:

Step 1: Audit Time Spent on Data Cleaning

Survey everyone who works with data: analysts, data engineers, marketing operations, sales operations, finance, support. Ask two questions:

- What percentage of your work time is spent finding, fixing, or working around data quality issues?

- Can you give a specific example from the past month?

Average the percentages by role and multiply each average by the total fully loaded compensation for that role. Summed across roles, this gives you the direct labor cost of bad data.
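The role-level version of the calculation can be sketched as follows. This assumes survey answers are collected as (role, share) pairs and that you know the total compensation per role; the function name, role names, and figures are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def labor_cost_by_role(
    responses: list[tuple[str, float]],
    role_compensation: dict[str, float],
) -> dict[str, float]:
    """Average each role's reported share of time, then multiply by the
    total fully loaded compensation for that role."""
    shares: dict[str, list[float]] = defaultdict(list)
    for role, share in responses:
        shares[role].append(share)
    return {role: mean(s) * role_compensation[role] for role, s in shares.items()}


# Hypothetical survey rows: (role, share of time spent on data issues).
responses = [
    ("analyst", 0.40), ("analyst", 0.30),
    ("sales_ops", 0.20), ("sales_ops", 0.10),
]
# Hypothetical total fully loaded compensation for everyone in each role.
role_compensation = {"analyst": 600_000, "sales_ops": 400_000}

costs = labor_cost_by_role(responses, role_compensation)
for role, cost in costs.items():
    print(f"{role}: ${cost:,.0f}")
print(f"Direct labor cost: ${sum(costs.values()):,.0f}")  # $270,000
```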

Step 2: Inventory Known Data Quality Issues

Create a list of every known data quality problem. For each one, document:

- Which data source is affected

- What the specific problem is (duplicates, missing values, incorrect values, staleness)

- How many records are affected

- What downstream processes or decisions are impacted

- What workaround currently exists (if any)

This inventory alone often motivates action. Most organizations are shocked by the length of the list.
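Keeping the inventory in a structured form makes it easy to sort and filter. A minimal sketch in Python: the fields mirror the checklist above, while `DataQualityIssue`, `Problem`, and the sample entries are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Problem(Enum):
    DUPLICATES = "duplicates"
    MISSING_VALUES = "missing values"
    INCORRECT_VALUES = "incorrect values"
    STALENESS = "staleness"


@dataclass
class DataQualityIssue:
    source: str                 # which data source is affected
    problem: Problem            # what the specific problem is
    records_affected: int       # how many records are affected
    downstream: list[str] = field(default_factory=list)  # impacted processes or decisions
    workaround: Optional[str] = None  # current workaround, if any


# Hypothetical entries for illustration only.
inventory = [
    DataQualityIssue("CRM", Problem.DUPLICATES, 4_200,
                     ["email campaigns", "churn model"], "manual merge each quarter"),
    DataQualityIssue("billing", Problem.STALENESS, 15_000, ["revenue dashboard"]),
    DataQualityIssue("ERP", Problem.INCORRECT_VALUES, 800,
                     ["product pricing"], "finance adjusts in a spreadsheet"),
]

# Two simple views: issues with no workaround yet, and the largest first.
no_workaround = [i.source for i in inventory if i.workaround is None]
largest_first = [i.source for i in
                 sorted(inventory, key=lambda i: i.records_affected, reverse=True)]
print(no_workaround)  # ['billing']
print(largest_first)  # ['billing', 'CRM', 'ERP']
```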

Step 3: Estimate Decision Impact

This is the hardest step but the most important. Review major decisions from the past year and ask: "Was this decision based on data? How confident are we in the data quality? What would have changed if the data were more accurate?"

You will not get precise numbers for most decisions. That is okay. Even rough estimates are useful for making the case for investment, for example: "This pricing decision was based on cost data that we now know was 8% too high, which means we left approximately $150K to $200K in margin on the table."

Prioritizing Data Quality Fixes

You cannot fix everything at once. Prioritize based on two dimensions: impact (how much does this issue cost?) and fixability (how hard is the fix?).
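The two dimensions can be turned into a simple triage rule that maps onto the three tiers below. This is a minimal sketch, assuming you already have a rough annual cost per issue and an effort rating; the threshold, the `Candidate` fields, and the sample issues are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cutoff for "high impact"; set it from your own cost estimates.
HIGH_IMPACT_THRESHOLD = 50_000


@dataclass
class Candidate:
    name: str
    annual_cost: float  # estimated yearly cost of leaving the issue unfixed
    effort: str         # "easy", "moderate", or "major"


def tier(c: Candidate) -> str:
    """Map impact and effort onto the Fix First / Second / Third tiers."""
    high_impact = c.annual_cost >= HIGH_IMPACT_THRESHOLD
    if high_impact and c.effort == "easy":
        return "Fix First"
    if high_impact and c.effort == "moderate":
        return "Fix Second"
    return "Fix Third"  # lower impact, or major effort


candidates = [
    Candidate("duplicate CRM contacts", 90_000, "easy"),
    Candidate("free-text country field", 60_000, "moderate"),
    Candidate("no lineage for finance data", 30_000, "major"),
]
for c in sorted(candidates, key=lambda c: c.annual_cost, reverse=True):
    print(f"{tier(c):<10} {c.name}")
```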

Fix First: High Impact, Easy Fix

- Automated deduplication rules: Set up matching rules to identify and merge duplicate records. Many CRMs and data platforms support this natively.

- Required field enforcement: Prevent records from being saved without critical fields. This stops new quality issues at the source.

- Data validation rules: Reject values outside expected ranges. An order amount of -$500 or an email without an @ sign should not be accepted.

- Sync frequency increases: If dashboards are stale because data syncs weekly instead of daily, increasing frequency is often a configuration change, not a development project.
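Many platforms configure deduplication and validation through settings rather than code, but the underlying logic is similar. A minimal sketch, assuming records arrive as Python dictionaries. The field names, the loose email pattern, and matching duplicates on email alone are simplifying assumptions; production matching rules usually also compare names, phone numbers, and addresses.

```python
import re

# Hypothetical rule set; adapt the field names to your own schema.
REQUIRED_FIELDS = ("email", "country", "order_amount")
# Deliberately loose: one "@", no spaces, and a dot in the domain part.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate(record: dict) -> list[str]:
    """Return rule violations for one record; an empty list means it passes."""
    errors = [f"missing {f}" for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    email = record.get("email")
    if isinstance(email, str) and email and not EMAIL_PATTERN.match(email):
        errors.append("malformed email")
    amount = record.get("order_amount")
    if isinstance(amount, (int, float)) and amount < 0:
        errors.append("negative order_amount")
    return errors


def dedupe_by_email(records: list[dict]) -> list[dict]:
    """Keep the first record for each normalized (trimmed, lowercased) email.
    Records without an email cannot be matched, so they are all kept."""
    seen: set[str] = set()
    unique = []
    for record in records:
        key = (record.get("email") or "").strip().lower()
        if not key:
            unique.append(record)
            continue
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


print(validate({"email": "pat.example.com", "country": "US", "order_amount": -500}))
# ['malformed email', 'negative order_amount']
```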

Fix Second: High Impact, Moderate Effort

- Source system standardization: Implement dropdown menus instead of free text for fields like country, industry, and product category. Standardize formats for phone numbers, addresses, and dates.

- Cross-system reconciliation: Build automated checks that compare key records across systems and flag mismatches. The number of active customers in the CRM should match the number of active customers in billing.

- Historical data cleanup: Run one-time cleanup projects on the worst offenders. Deduplicate the customer database, fill in missing fields from other sources, correct systematic errors.
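Reconciliation can go a step further than comparing counts. A minimal sketch, assuming both systems can export a customer ID they share plus one comparable field (email here); the function name and sample data are hypothetical.

```python
def reconcile(crm: dict[str, str], billing: dict[str, str]) -> dict[str, list[str]]:
    """Compare customer_id -> email maps from two systems and list mismatches."""
    crm_ids, billing_ids = set(crm), set(billing)
    shared = crm_ids & billing_ids
    return {
        "only_in_crm": sorted(crm_ids - billing_ids),
        "only_in_billing": sorted(billing_ids - crm_ids),
        "value_mismatch": sorted(
            cid for cid in shared
            if crm[cid].strip().lower() != billing[cid].strip().lower()
        ),
    }


# Hypothetical extracts keyed by a shared customer ID.
crm = {"C1": "ann@example.com", "C2": "bob@example.com", "C3": "cy@example.com"}
billing = {"C1": "Ann@Example.com", "C2": "robert@example.com", "C4": "dee@example.com"}

report = reconcile(crm, billing)
print(report)
# {'only_in_crm': ['C3'], 'only_in_billing': ['C4'], 'value_mismatch': ['C2']}
```

Both systems hold three customers, so a count comparison alone would pass; the record-level check still surfaces three problems.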

Fix Third: Lower Impact or Major Effort

- Master data management: Designate a single source of truth for each entity type and build sync processes so all systems stay aligned. This is architecturally correct but operationally complex.

- Data lineage tracking: Map how data flows from source to destination across all systems. Useful for root cause analysis but requires significant effort to build and maintain.
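At its simplest, lineage is a directed graph, and root cause analysis is a walk upstream from the broken output. A minimal sketch with a hand-written, hypothetical lineage map; in practice the map would come from pipeline metadata or a dedicated lineage tool.

```python
from collections import deque

# Hypothetical lineage map: each dataset -> the upstream datasets it reads from.
UPSTREAM: dict[str, list[str]] = {
    "revenue_dashboard": ["finance_mart"],
    "finance_mart": ["billing_db", "crm_export"],
    "crm_export": ["crm"],
    "billing_db": [],
    "crm": [],
}


def upstream_of(node: str, lineage: dict[str, list[str]]) -> set[str]:
    """Breadth-first walk collecting every dataset that feeds into `node`."""
    seen: set[str] = set()
    queue = deque(lineage.get(node, []))
    while queue:
        current = queue.popleft()
        if current not in seen:
            seen.add(current)
            queue.extend(lineage.get(current, []))
    return seen


suspects = upstream_of("revenue_dashboard", UPSTREAM)
# Original sources: upstream datasets that read from nothing else.
root_sources = sorted(n for n in suspects if not UPSTREAM.get(n))
print(root_sources)  # ['billing_db', 'crm']
```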

Preventing Bad Data

Fixing existing data quality issues is necessary but insufficient. Without prevention, you are bailing water without plugging the leak. Here are the most effective prevention measures:

- Validate at the point of entry. The cheapest time to fix data is when it is first entered. Field validation, dropdown menus, and required fields prevent many common entry errors.

- Automate data movement. Every time a human manually copies data from one system to another, errors are introduced. APIs and integration platforms move data without introducing human error.

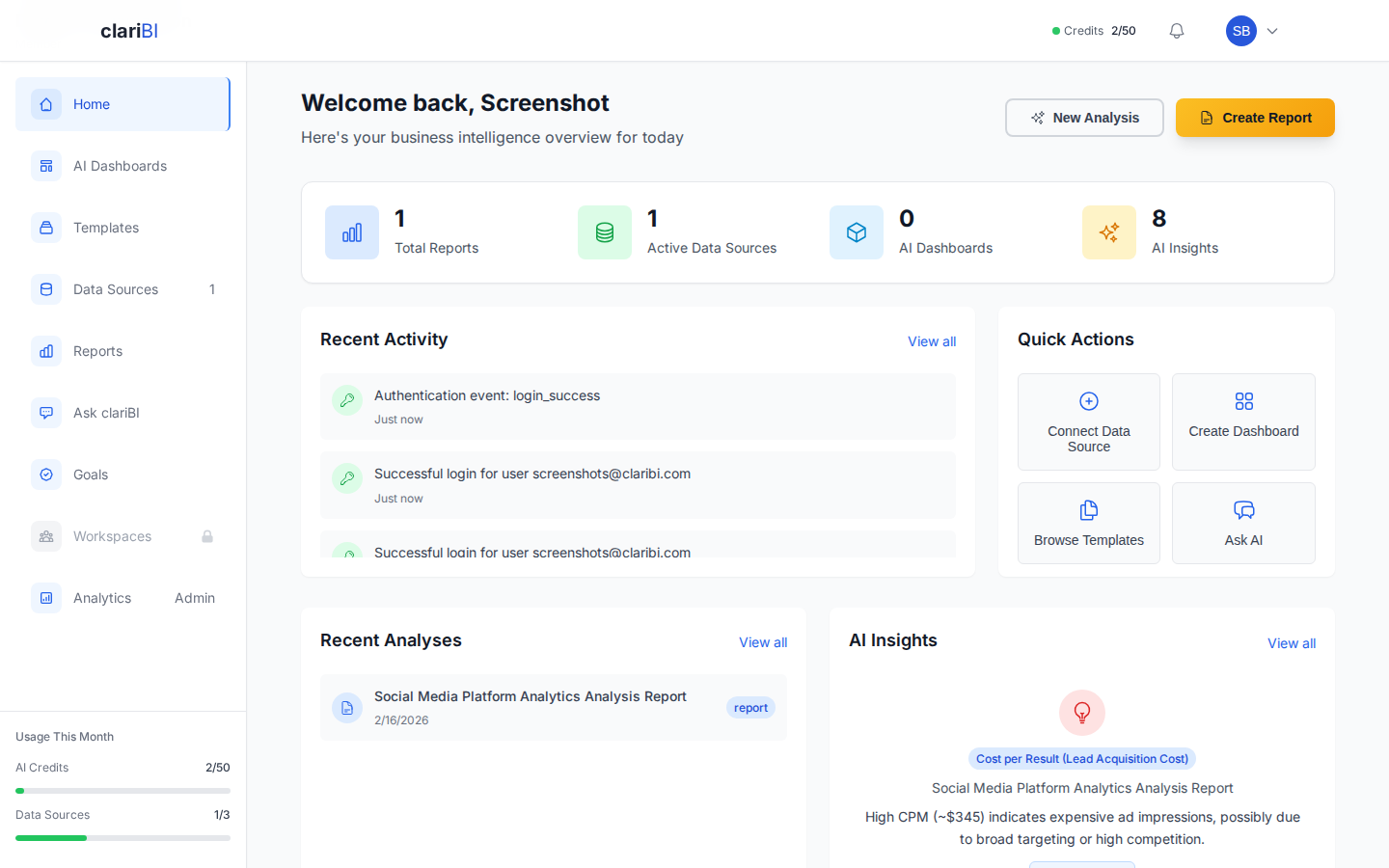

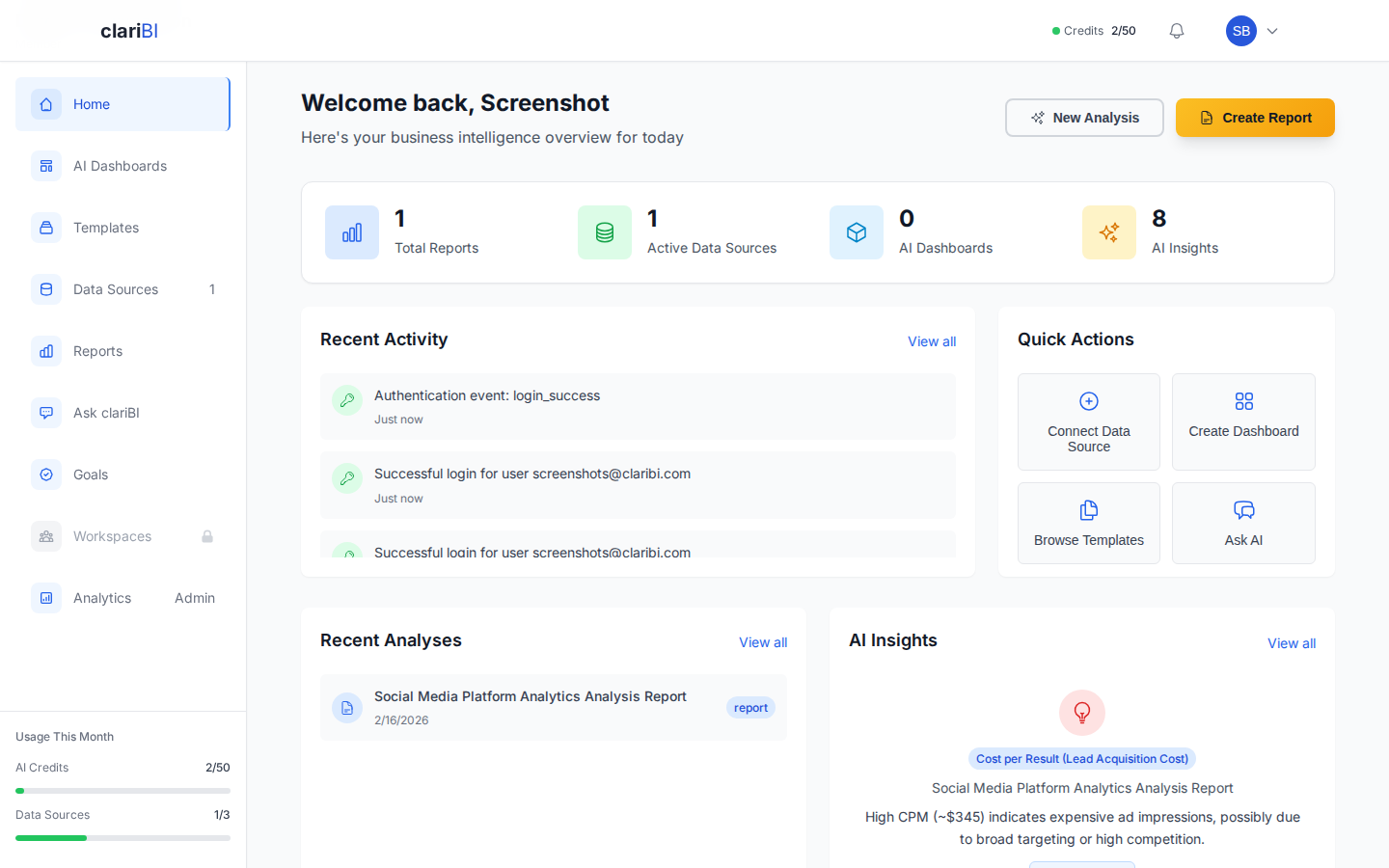

- Monitor continuously. Set up automated alerts for data quality metrics: completeness rates, duplicate rates, freshness, and anomaly detection. In clariBI, you can monitor data source sync status and freshness from the data sources management page. See the data source documentation for configuration details.

- Assign ownership. Every data source needs an owner accountable for its quality. Without ownership, data quality is everyone's problem and therefore nobody's problem.

- Train data producers. The people who enter data (sales reps, support agents, operations staff) need to understand why quality matters and how their input affects downstream decisions. Show them the dashboards that use their data. When people see how their input becomes insight, they enter data more carefully.
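The monitoring metrics mentioned above are straightforward to compute for any batch of records. This is a generic sketch, not clariBI's monitoring feature or API; the thresholds, the z-score anomaly rule, and the sample rows are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean, stdev

# Hypothetical alert thresholds; tune them per data source.
THRESHOLDS = {"completeness": 0.95, "duplicate_rate": 0.02, "hours_since_sync": 24.0}


def quality_metrics(rows: list[dict], key: str, required: tuple[str, ...],
                    last_sync: datetime, now: datetime) -> dict[str, float]:
    """Completeness, duplicate rate, and freshness for one batch of rows."""
    total = len(rows)
    complete = sum(all(r.get(f) not in (None, "") for f in required) for r in rows)
    distinct = len({r.get(key) for r in rows})
    return {
        "completeness": complete / total if total else 1.0,
        "duplicate_rate": (total - distinct) / total if total else 0.0,
        "hours_since_sync": (now - last_sync).total_seconds() / 3600,
    }


def breached(metrics: dict[str, float]) -> list[str]:
    """Names of metrics outside their thresholds."""
    bad = []
    if metrics["completeness"] < THRESHOLDS["completeness"]:
        bad.append("completeness")
    for name in ("duplicate_rate", "hours_since_sync"):
        if metrics[name] > THRESHOLDS[name]:
            bad.append(name)
    return bad


def is_anomalous(history: list[float], today: float, z: float = 3.0) -> bool:
    """Flag today's value (e.g. a daily row count) if it sits more than
    z standard deviations from the recent mean."""
    if len(history) < 2:
        return False
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu
    return abs(today - mu) / sigma > z


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
rows = [{"id": 1, "email": "a@example.com"}, {"id": 2, "email": ""},
        {"id": 2, "email": "b@example.com"}, {"id": 3, "email": "c@example.com"}]
m = quality_metrics(rows, "id", ("id", "email"), now - timedelta(hours=30), now)
print(m)  # {'completeness': 0.75, 'duplicate_rate': 0.25, 'hours_since_sync': 30.0}
print(breached(m))  # ['completeness', 'duplicate_rate', 'hours_since_sync']
print(is_anomalous([1000, 1020, 980, 1010, 990], today=400))  # True
```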

Making the Business Case

When presenting the cost of bad data to leadership, use this structure:

- The aggregate number: "We estimate data quality issues cost us $X per year across labor, decisions, and risk."

- Three specific examples: Choose the most compelling stories where bad data led to quantifiable costs. Make them concrete and relatable.

- The fix: "Here are the top three issues by impact and the estimated cost and timeline to fix each one."

- The expected return: "Fixing these three issues will recover an estimated $Y per year, a Z% return on the investment within 6 months."
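The aggregate number and the expected return reduce to simple arithmetic. A minimal sketch, assuming recovered savings accrue evenly over the year and the fix cost is paid up front; the function name and every figure below are hypothetical.

```python
def roi_over_period(annual_recovery: float, fix_cost: float, months: int = 6) -> float:
    """ROI over `months`, assuming savings accrue evenly through the year."""
    if fix_cost <= 0:
        raise ValueError("fix_cost must be positive")
    recovered = annual_recovery * months / 12
    return (recovered - fix_cost) / fix_cost


# Hypothetical per-category estimates feeding the aggregate number ($X).
cost_estimates = {"labor": 270_000, "decisions": 175_000, "risk": 55_000}
print(f"Estimated annual cost: ${sum(cost_estimates.values()):,}")  # $500,000

# Hypothetical: the top three fixes recover $600K a year and cost $150K.
print(f"6-month ROI: {roi_over_period(600_000, 150_000):.0%}")  # 100%
```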

Keep it to one page plus an appendix with details for anyone who wants to dig deeper. For ongoing data quality monitoring, clariBI's dashboard capabilities let you build a dedicated data quality dashboard that tracks completeness, freshness, and anomalies across your connected data sources. Visit the dashboard creation guide to get started.

Conclusion

Bad data costs are real, large, and largely invisible until you look for them. The organizations that quantify these costs and invest in prevention and remediation gain a compounding advantage: their decisions get better, their operations get more efficient, and their technology investments deliver their promised value. The first step is measurement. Audit your data quality costs using the framework above, prioritize the highest-impact fixes, and build the business case for sustained investment in data quality. The numbers will speak for themselves.