Every company claims to be "data-driven," but most are not. They collect data, build dashboards, and run reports, yet the decisions themselves still get made on gut instinct and by whoever argues loudest in the meeting. This article presents a practical, repeatable framework for using data to make better business decisions.

What Data-Driven Actually Means

Data-driven decision making does not mean every decision requires a spreadsheet. It means using evidence systematically to inform choices, reduce uncertainty, and measure outcomes. Some decisions are small enough that intuition is fine. Others are large enough that getting them wrong costs real money.

The framework below is designed for consequential decisions: pricing changes, market entry, product launches, hiring plans, budget allocation. These are the decisions where being wrong is expensive and being right is valuable.

The Six-Step Framework

Step 1: Define the Decision Clearly

Most analysis goes sideways because the question was never clearly defined. "Should we expand into Europe?" is too vague. Better: "Should we open a sales office in London in Q2 with a budget of $500K, targeting mid-market SaaS companies?"

A well-defined decision includes:

- The specific action you are considering

- The timeframe for implementation

- The resources required (budget, people, time)

- The alternatives you are choosing between

- The success criteria that would make you glad you did it

Write this down before doing any analysis. If you cannot articulate the decision clearly, you are not ready to analyze it.

Step 2: Identify What Data You Need

With a clear decision in hand, determine what evidence would change your mind in either direction. Ask yourself: "What would I need to see to feel confident saying yes? What would make me say no?"

For the London office example, you might need:

- Current revenue from UK-based customers (do we already have traction?)

- Win rates for UK deals compared to US deals (is there product-market fit?)

- Competitor presence and pricing in the UK market

- Cost of hiring in London (salary benchmarks, office costs)

- Pipeline of UK prospects in the CRM

- Payback period analysis at various growth scenarios

Separate "must-have" data from "nice-to-have" data. You will never have perfect information. The goal is to reduce uncertainty to an acceptable level, not to eliminate it entirely.
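The payback period item in the list above can be roughed out in a few lines before any detailed modeling. The sketch below is illustrative only: the cost, revenue, and growth figures are hypothetical placeholders, and the model assumes revenue compounds monthly at a constant rate.

```python
def payback_months(upfront_cost, monthly_cost, first_month_revenue,
                   monthly_growth, max_months=60):
    """Return the first month in which cumulative cash flow reaches zero, or None."""
    cumulative = -upfront_cost
    revenue = first_month_revenue
    for month in range(1, max_months + 1):
        cumulative += revenue - monthly_cost
        if cumulative >= 0:
            return month
        # Assumption: revenue compounds at a constant monthly rate.
        revenue *= 1 + monthly_growth
    return None  # no payback within the horizon


# Hypothetical inputs for the London office, for illustration only.
for growth in (0.05, 0.10, 0.15):
    months = payback_months(
        upfront_cost=200_000,
        monthly_cost=25_000,
        first_month_revenue=20_000,
        monthly_growth=growth,
    )
    print(f"growth {growth:.0%}/month -> payback month: {months}")
```

A result of `None` is itself useful evidence: under that scenario the office does not pay back within the horizon you chose.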

Step 3: Gather and Validate the Data

This is where most teams spend too much time. Data gathering should be time-boxed. Give yourself a deadline (one week for major decisions, one day for smaller ones) and work with what you can assemble in that window.

Key principles for data gathering:

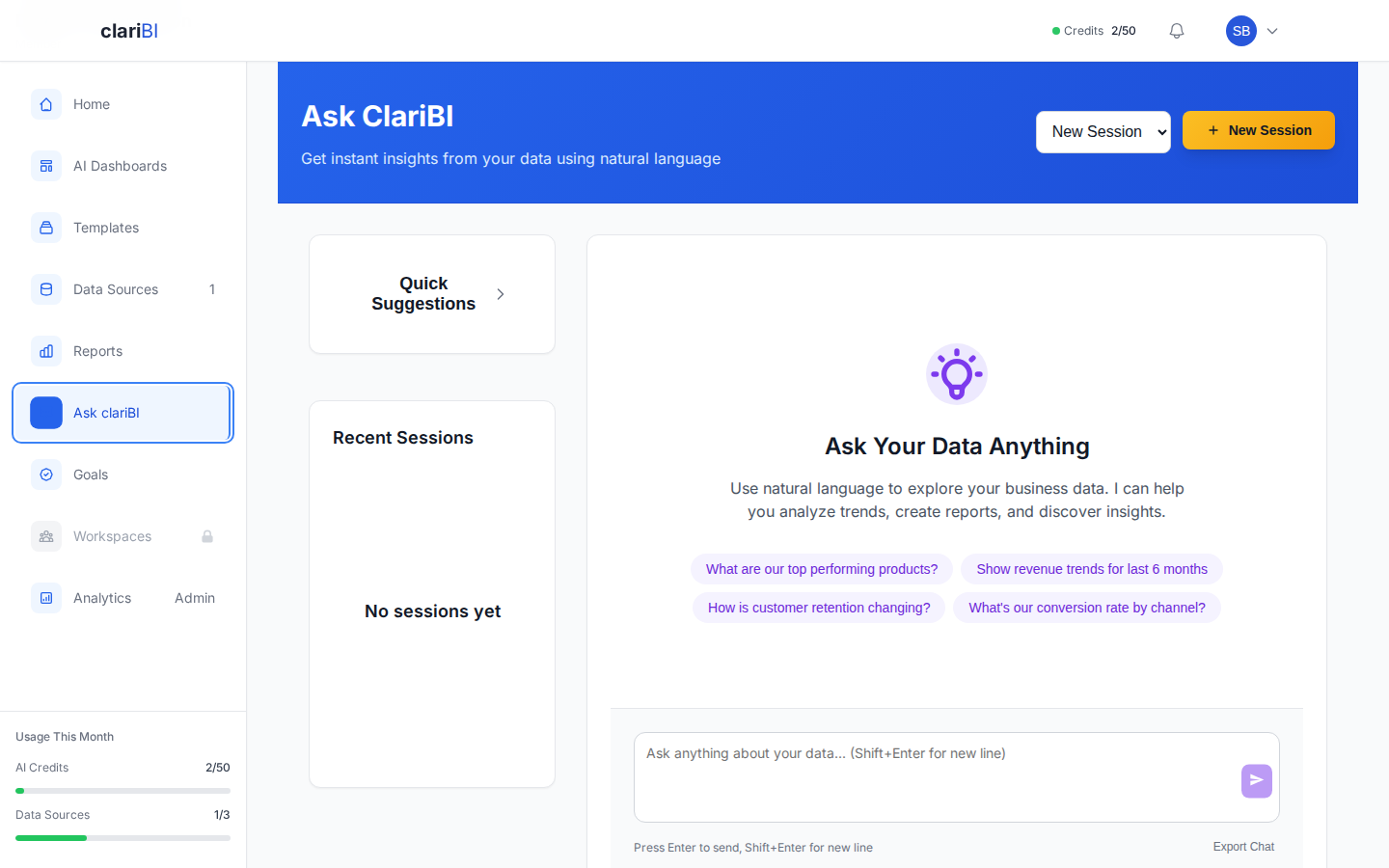

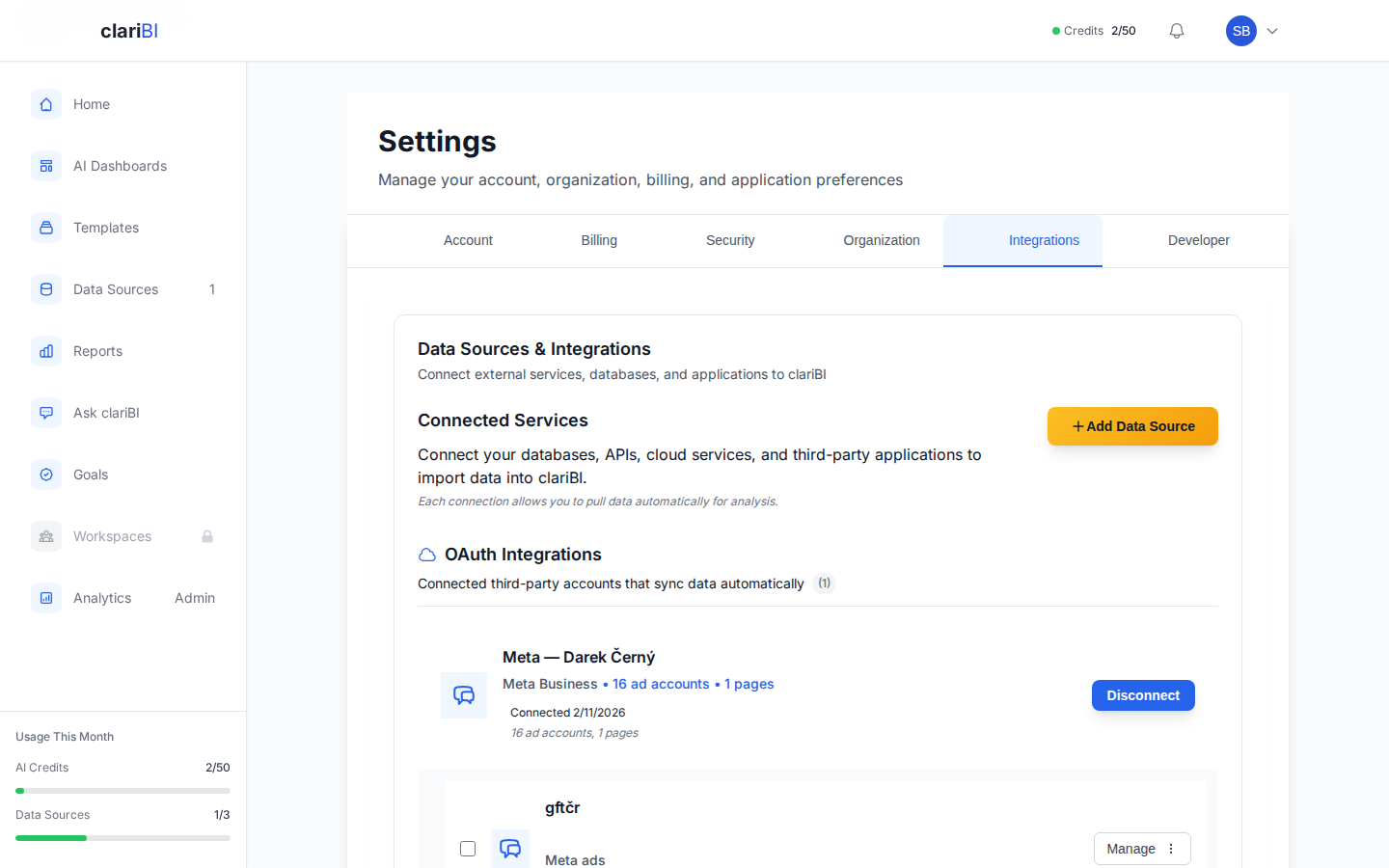

Use existing data first. You probably already have customer data, revenue data, and market research sitting in your systems. In clariBI, you can connect your CRM, billing system, and databases to pull this information into a single view. See the data connections guide for setup instructions.

Validate the data. Check for completeness, recency, and accuracy. If your CRM data is 6 months old, it may not reflect current market conditions. If your revenue numbers exclude one-time fees, the analysis will be skewed.
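Completeness and recency checks are easy to automate. The following is a minimal sketch, assuming records arrive as plain dictionaries with a `last_updated` date; the field names and sample rows are hypothetical. Accuracy (for example, whether revenue excludes one-time fees) still needs a human who knows how the numbers were produced.

```python
from datetime import date, timedelta


def validate_records(records, required_fields, max_age_days, today):
    """Return (record_index, problem) pairs for completeness and recency issues."""
    problems = []
    cutoff = today - timedelta(days=max_age_days)
    for index, record in enumerate(records):
        # Completeness: every required field must be present and non-empty.
        for field_name in required_fields:
            if record.get(field_name) in (None, ""):
                problems.append((index, f"missing {field_name}"))
        # Recency: flag records last updated before the cutoff date.
        updated = record.get("last_updated")
        if updated is not None and updated < cutoff:
            problems.append((index, "stale"))
    return problems


# Hypothetical CRM rows, for illustration only.
crm_rows = [
    {"account": "Acme Ltd", "amount": 12000, "last_updated": date(2024, 6, 1)},
    {"account": "Beta plc", "amount": None, "last_updated": date(2024, 5, 20)},
    {"account": "Gamma UK", "amount": 8000, "last_updated": date(2023, 11, 2)},
]
issues = validate_records(
    crm_rows, ["account", "amount"], max_age_days=180, today=date(2024, 7, 1)
)
print(issues)
```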

Document your sources. When you present findings to stakeholders, they will ask "where did this number come from?" Have an answer ready.

Step 4: Analyze the Options

With data in hand, compare your options systematically. Do not just analyze the option you favor. Analyze all alternatives with equal rigor.

Quantitative analysis: Build financial models for each option. What is the expected return? What is the worst case? What assumptions drive the biggest variance?
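One simple way to put expected return and worst case side by side is a scenario table per option. This is a sketch under stated assumptions, not a full financial model: the option names, probabilities, and returns below are hypothetical, and each option is reduced to a handful of discrete scenarios.

```python
def summarize_option(scenarios):
    """Summarize a list of (probability, net_return) scenarios for one option."""
    total_probability = sum(p for p, _ in scenarios)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    expected = sum(p * net_return for p, net_return in scenarios)
    worst_case = min(net_return for _, net_return in scenarios)
    return {"expected": expected, "worst_case": worst_case}


# Hypothetical options and scenarios, for illustration only.
options = {
    "open_london_office": [(0.3, 900_000), (0.5, 200_000), (0.2, -500_000)],
    "serve_uk_remotely": [(0.4, 250_000), (0.6, 50_000)],
}
for name, scenarios in options.items():
    print(name, summarize_option(scenarios))
```

Applying the same function to every alternative keeps the comparison honest: the option you favor gets no special treatment.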

Sensitivity analysis: Identify the 2-3 assumptions that matter most and test different values. For the London office, the model might be most sensitive to win rate and average deal size. If either drops 20% from your assumption, does the decision change?
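A one-at-a-time sensitivity check like the one described above can be sketched as follows. The profit model and every input value are hypothetical; the point is only to show how a 20% drop in a single assumption can flip the sign of the result.

```python
def annual_profit(deals_pursued, win_rate, avg_deal_size, annual_cost):
    """Toy model: revenue from won deals minus the cost of running the office."""
    return deals_pursued * win_rate * avg_deal_size - annual_cost


def sensitivity(base, drop=0.20):
    """Recompute profit with each key assumption reduced by `drop`, one at a time."""
    results = {"base": annual_profit(**base)}
    for name in ("win_rate", "avg_deal_size"):
        shocked = dict(base, **{name: base[name] * (1 - drop)})
        results[f"{name} -{drop:.0%}"] = annual_profit(**shocked)
    return results


# Hypothetical assumptions for the London office, for illustration only.
base_case = {
    "deals_pursued": 100,
    "win_rate": 0.25,
    "avg_deal_size": 40_000,
    "annual_cost": 900_000,
}
for label, profit in sensitivity(base_case).items():
    print(f"{label}: {profit:,.0f}")
```

With these made-up inputs the base case is profitable, but a 20% drop in either win rate or deal size turns it into a loss. That is the signal to spend your remaining analysis time validating those two numbers.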

Qualitative factors: Not everything can be quantified. Strategic positioning, team morale, competitive dynamics, and brand perception matter. List these alongside the numbers and be explicit about how they influence the decision.

Step 5: Make the Decision and Document It

This is where frameworks often break down. The analysis is done, but the decision stalls because stakeholders want more data, or nobody wants to own the call. Set a decision deadline and stick to it.

When you make the decision, document:

- What was decided and by whom

- What data supported the decision

- What assumptions were made

- What risks were accepted

- What would cause you to revisit the decision

This documentation is not bureaucracy. It is insurance. When someone asks six months later "why did we do this?", you have a clear record. It also forces intellectual honesty at the time of decision.
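If your team keeps decision records in code or a lightweight internal tool, the checklist above maps naturally onto a small data structure. The class and field names below are hypothetical, chosen to mirror the list; a shared document template serves the same purpose.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class DecisionRecord:
    decision: str       # what was decided
    decided_by: str     # who owns the call
    decided_on: date
    supporting_data: List[str] = field(default_factory=list)
    assumptions: List[str] = field(default_factory=list)
    accepted_risks: List[str] = field(default_factory=list)
    revisit_triggers: List[str] = field(default_factory=list)

    def missing_sections(self):
        """Names of the list sections that are still empty."""
        sections = ("supporting_data", "assumptions",
                    "accepted_risks", "revisit_triggers")
        return [name for name in sections if not getattr(self, name)]


# Hypothetical record, for illustration only.
record = DecisionRecord(
    decision="Open a London sales office in Q2",
    decided_by="VP Sales",
    decided_on=date(2024, 3, 15),
    assumptions=["UK win rate matches US win rate"],
)
print(record.missing_sections())
```

Refusing to close a decision while `missing_sections()` is non-empty is one way to enforce the intellectual honesty described above.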

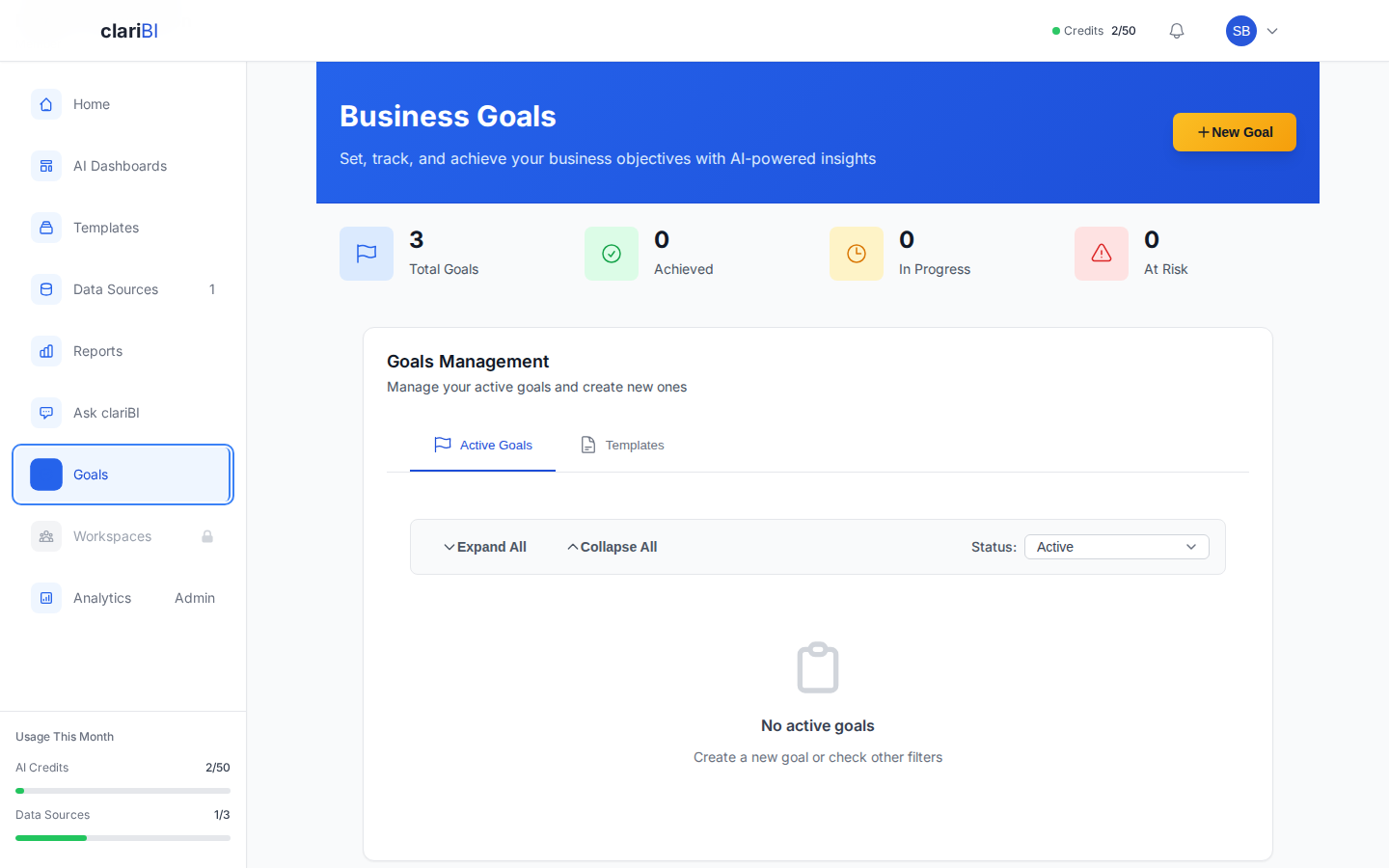

Step 6: Measure Outcomes and Learn

This is the step almost everyone skips. After you make a data-driven decision, measure whether the expected outcomes materialized. This closes the feedback loop and makes your next decision better.

Set up tracking before you implement. Define the metrics you will check, the timeframe for evaluation, and the thresholds for success. In clariBI, you can create a dedicated dashboard for tracking decision outcomes and share it with stakeholders for regular review.
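Whatever tool holds the dashboard, the underlying check is a comparison of actuals against thresholds that were agreed in advance. A minimal sketch, with hypothetical metric names and values, and independent of any particular BI product:

```python
def evaluate_outcomes(targets, actuals):
    """Compare actuals to thresholds.

    targets maps metric name -> (direction, threshold), where direction is
    "min" (actual must be at least the threshold) or "max" (at most).
    """
    report = {}
    for name, (direction, threshold) in targets.items():
        value = actuals.get(name)
        if value is None:
            report[name] = "not measured"
        elif direction == "min":
            report[name] = "met" if value >= threshold else "missed"
        elif direction == "max":
            report[name] = "met" if value <= threshold else "missed"
        else:
            raise ValueError(f"unknown direction: {direction!r}")
    return report


# Hypothetical success criteria, written down before implementation.
targets = {
    "uk_new_revenue": ("min", 600_000),
    "cost_per_hire": ("max", 30_000),
    "uk_win_rate": ("min", 0.20),
}
# Hypothetical results at the evaluation date.
actuals = {"uk_new_revenue": 640_000, "cost_per_hire": 34_500}
print(evaluate_outcomes(targets, actuals))
```

The "not measured" status matters as much as "missed": a metric nobody tracked means the feedback loop was never closed.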

Be honest in the retrospective. Did the data lead you to a good decision? Were your assumptions correct? What would you do differently? This learning compounds over time and is what separates organizations that are genuinely data-driven from those that just talk about it.

When NOT to Use This Framework

Not every decision deserves a formal framework. Here is a rough guide:

| Decision Type | Approach | Example |
|---|---|---|
| Low cost, easily reversible | Just decide. Use intuition. | Blog post topic, meeting agenda |
| Moderate cost, somewhat reversible | Light analysis. 30-minute data check. | Pricing a single feature, hiring a contractor |
| High cost, hard to reverse | Full framework. Structured analysis. | Market entry, major hire, platform migration |
| Existential | Full framework + external input. | Fundraising, acquisition, pivot |

The cost of analysis should be proportional to the cost of being wrong. Do not spend two weeks analyzing a $5,000 decision.
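The guide above can be turned into a rough triage helper. The dollar thresholds below are hypothetical and should be set for your own organization. Taking the more severe of the cost and reversibility ratings is also an assumption of this sketch rather than a rule from the table.

```python
APPROACHES = [
    "Just decide. Use intuition.",
    "Light analysis. 30-minute data check.",
    "Full framework. Structured analysis.",
    "Full framework + external input.",
]
REVERSIBILITY_LEVEL = {"easy": 0, "somewhat": 1, "hard": 2}


def recommended_approach(cost_of_being_wrong, reversibility, existential=False,
                         low_cost=10_000, high_cost=250_000):
    """Map a decision to an approach; thresholds are illustrative defaults."""
    if existential:
        return APPROACHES[3]
    if cost_of_being_wrong < low_cost:
        cost_level = 0
    elif cost_of_being_wrong < high_cost:
        cost_level = 1
    else:
        cost_level = 2
    # Assumption: when cost and reversibility disagree, use the stricter one.
    return APPROACHES[max(cost_level, REVERSIBILITY_LEVEL[reversibility])]


print(recommended_approach(5_000, "easy"))
print(recommended_approach(500_000, "hard"))
```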

Common Traps in Data-Driven Decision Making

Confirmation Bias

You already have an opinion before the analysis starts. You unconsciously seek data that supports your position and discount data that contradicts it. Counter this by assigning someone to argue the opposite side, or by writing down your hypothesis before looking at the data and being explicit about what would disprove it.

Analysis Paralysis

More data does not always mean better decisions. At some point, additional information has diminishing returns. If you have been analyzing for more than two weeks and still feel uncertain, the problem is probably not insufficient data. It is discomfort with uncertainty. Accept that and decide.

Survivorship Bias

You study successful companies and copy their strategies without accounting for the many companies that did the same thing and failed. Be cautious about drawing conclusions from a biased sample.

Precision vs. Accuracy

A forecast of "$1,247,832 in revenue next quarter" implies false precision. "Between $1.1M and $1.4M" is more honest and more useful. Match the precision of your analysis to the uncertainty in your data.
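One way to enforce this habit is to report a rounded range instead of the raw model output. The sketch below assumes you can put a rough relative error on the forecast; the 12% used here is a hypothetical figure, not something derived from data.

```python
import math


def round_sig(value, sig=2):
    """Round a number to `sig` significant figures."""
    if value == 0:
        return 0.0
    digits = sig - 1 - math.floor(math.log10(abs(value)))
    return round(value, digits)


def forecast_range(point_estimate, relative_error, sig=2):
    """Turn a point forecast into a (low, high) range at honest precision."""
    low = round_sig(point_estimate * (1 - relative_error), sig)
    high = round_sig(point_estimate * (1 + relative_error), sig)
    return low, high


low, high = forecast_range(1_247_832, relative_error=0.12)
print(f"Between ${low / 1e6:.1f}M and ${high / 1e6:.1f}M")
```

With these inputs the point forecast of $1,247,832 is reported as "Between $1.1M and $1.4M", matching the range above.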

Building a Data-Driven Culture

A framework is only useful if people follow it. Building a data-driven culture requires:

- Leadership modeling: When executives use data in their decisions publicly, teams follow.

- Access to data: If people cannot get to the data, they cannot use it. Self-service BI tools like clariBI reduce the barrier to data access. See our self-service analytics guide for more on this.

- Tolerance for bad news: If data that contradicts a leader's opinion gets shot down, people stop bringing data.

- Rewarding good process: Celebrate good analytical thinking even when the outcome is bad. Penalize poor analytical thinking even when the outcome is good. Outcomes include luck; process does not.

Data-driven decision making is not a tool or a technique. It is a habit. The more you practice it, the more natural it becomes, and the better your decisions get over time.