Your daily revenue just dropped 30%. Is that a crisis, or is it normal for a Tuesday after a holiday weekend? Anomaly detection answers this question systematically, separating genuine unusual events from expected variation. This article explains the methods behind anomaly detection, from simple statistics to machine learning, and the practical considerations that determine whether your alerts are helpful or just noisy.

What Counts as an Anomaly

An anomaly is a data point or pattern that deviates significantly from expected behavior. The key word is "expected." A 40% revenue drop on Christmas Day is not an anomaly for a B2B software company; it is predictable. But a 40% drop on a normal Wednesday would be.

This means anomaly detection requires a model of what "normal" looks like. The quality of your anomaly detection depends entirely on the quality of your normal baseline.

Method 1: Static Thresholds

The simplest approach: set a fixed upper and lower bound for a metric and alert when the value crosses it.

How It Works

Example: Daily revenue averages $50,000. Set an alert if it drops below $35,000 or exceeds $75,000.
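As a minimal sketch of that rule in Python: the function name and return labels are illustrative assumptions, and the bounds are the ones from the example above.

```python
def check_static_threshold(value, lower=35_000, upper=75_000):
    """Classify a metric value against a fixed pair of bounds."""
    if value < lower:
        return "LOW"
    if value > upper:
        return "HIGH"
    return "NORMAL"

# Hypothetical daily revenue figures
for revenue in (32_500, 51_200, 80_000):
    print(revenue, check_static_threshold(revenue))
```

Nothing in the check looks at history, which is why it is easy to explain and also why it cannot adapt on its own.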

Strengths

- Simple to implement and understand

- Easy to explain to stakeholders ("alert if revenue drops below $35K")

- Few false positives from normal variation, provided the thresholds are set well

Weaknesses

- Does not account for trends. If revenue is growing, a threshold set six months ago may be too low.

- Does not account for seasonality. If weekends are always lower, a weekend value below the weekday threshold is expected, not anomalous.

- Requires manual updating as business conditions change.

- Set too tight: constant false alarms. Set too loose: misses real problems.

Static thresholds work well for metrics that are genuinely stable: server uptime, error rates, or process compliance rates. They work poorly for business metrics with natural variation.

Method 2: Statistical Baselines

A statistical baseline defines "normal" using historical data and flags values that fall outside an expected range derived from that data, such as the mean plus or minus a few standard deviations.

Standard Deviation Method

Calculate the mean and standard deviation of a metric over a historical window. Flag any value more than N standard deviations from the mean.

    -- Example: Flag daily revenue anomalies using 2 standard deviations
    WITH stats AS (
        SELECT
            AVG(daily_revenue) AS mean_rev,
            STDDEV(daily_revenue) AS std_rev
        FROM daily_metrics
        WHERE metric_date BETWEEN
            CURRENT_DATE - INTERVAL '90 days'
            AND CURRENT_DATE - INTERVAL '1 day'
    )
    SELECT
        d.metric_date,
        d.daily_revenue,
        s.mean_rev,
        CASE
            WHEN d.daily_revenue > s.mean_rev + 2 * s.std_rev THEN 'HIGH'
            WHEN d.daily_revenue < s.mean_rev - 2 * s.std_rev THEN 'LOW'
            ELSE 'NORMAL'
        END AS anomaly_status
    FROM daily_metrics d
    CROSS JOIN stats s
    WHERE d.metric_date = CURRENT_DATE;

Choosing N (the Sensitivity)

- 2 standard deviations: Flags roughly 5% of values, assuming the metric is approximately normally distributed. Good for initial exploration, but generates many alerts.

- 2.5 standard deviations: Flags about 1.2% of values under the same assumption. A good balance for most business metrics.

- 3 standard deviations: Flags only about 0.3% of values. Use for metrics where false alarms are costly.
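The percentages above follow from the two-sided tail of a normal distribution. A short Python sketch reproduces them; the function name is an illustrative assumption, and the result only holds to the extent that the metric is roughly normal.

```python
import math

def share_flagged(n_sigma):
    """Two-sided tail probability of a normal distribution beyond n_sigma."""
    return math.erfc(n_sigma / math.sqrt(2))

for n in (2, 2.5, 3):
    print(f"{n} sigma: {share_flagged(n):.2%} of values flagged")
```

For a daily metric with no real anomalies, that works out to roughly one false alert every three weeks at 2 sigma and about one a year at 3 sigma, per metric. Skewed or heavy-tailed metrics will cross these thresholds more often.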

Improving Statistical Baselines

Seasonal Decomposition

Instead of comparing today's value to the overall mean, compare it to the expected value for today. Monday revenue should be compared to other Mondays. January should be compared to other Januaries.

    -- Day-of-week adjusted baseline
    WITH dow_stats AS (
        SELECT
            EXTRACT(DOW FROM metric_date) AS day_of_week,
            AVG(daily_revenue) AS mean_rev,
            STDDEV(daily_revenue) AS std_rev
        FROM daily_metrics
        WHERE metric_date >= CURRENT_DATE - INTERVAL '180 days'
            -- Exclude today so the value being tested is not part of its own baseline
            AND metric_date < CURRENT_DATE
        GROUP BY EXTRACT(DOW FROM metric_date)
    )
    SELECT
        d.metric_date,
        d.daily_revenue,
        s.mean_rev AS expected,
        -- NULLIF avoids division by zero when a weekday has no variation
        (d.daily_revenue - s.mean_rev) / NULLIF(s.std_rev, 0) AS z_score
    FROM daily_metrics d
    JOIN dow_stats s
        ON EXTRACT(DOW FROM d.metric_date) = s.day_of_week
    WHERE d.metric_date = CURRENT_DATE;

Rolling Windows

Use a rolling window (e.g., last 90 days) instead of all historical data. This allows the baseline to adapt to trends without requiring explicit trend modeling.
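A rolling baseline can be sketched in a few lines of Python. This is an illustration under assumptions, not a production implementation: the function name is hypothetical, `values` is taken to be a list of daily figures ordered oldest to newest, and `window` must be at least 2 so a standard deviation can be computed.

```python
from statistics import mean, stdev

def rolling_z_scores(values, window=90):
    """Z-score of each value against the `window` values that precede it.

    Positions without a full window of history yield None.
    """
    scores = []
    for i, value in enumerate(values):
        if i < window:
            scores.append(None)
            continue
        history = values[i - window:i]  # excludes the value being scored
        sd = stdev(history)
        scores.append((value - mean(history)) / sd if sd > 0 else None)
    return scores

# Hypothetical short series with a 6-day window; the last day drops sharply.
revenue = [100, 102, 98, 101, 99, 100, 60]
print(rolling_z_scores(revenue, window=6)[-1])
```

Because each score uses only the preceding window, old data ages out automatically and the baseline follows a slow trend.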

Method 3: Machine Learning Approaches

For complex patterns that statistical methods miss, machine learning can build more sophisticated baselines.

Isolation Forest

This algorithm isolates anomalies by randomly partitioning data. Anomalies, being rare and different, get isolated in fewer partitions. It works well with multiple features simultaneously: flag a day that is unusual considering revenue, traffic, conversion rate, and average order value together.
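To make the isolation idea concrete, here is a deliberately simplified, standard-library Python sketch. It is not the full Isolation Forest algorithm (it skips subsampling and the normalized anomaly score), and the function names, feature choice, and data are hypothetical. It only counts how many random splits it takes to separate one day from the rest.

```python
import random

def path_length(point, data, rng, depth=0, max_depth=12):
    """Count the random splits needed to separate `point` from `data`."""
    if len(data) <= 1 or depth >= max_depth:
        return depth
    # Only split on features that still vary inside this partition.
    candidates = [f for f in range(len(point))
                  if len({row[f] for row in data}) > 1]
    if not candidates:
        return depth
    feature = rng.choice(candidates)
    values = [row[feature] for row in data]
    split = rng.uniform(min(values), max(values))
    # Follow the point: keep only the rows on its side of the split.
    if point[feature] < split:
        same_side = [row for row in data if row[feature] < split]
    else:
        same_side = [row for row in data if row[feature] >= split]
    return path_length(point, same_side, rng, depth + 1, max_depth)

def mean_path_length(point, data, n_trees=200, seed=0):
    """Average isolation depth over many random trees (lower = more unusual)."""
    rng = random.Random(seed)
    return sum(path_length(point, data, rng) for _ in range(n_trees)) / n_trees

# Hypothetical days as (sessions, conversion_rate, average_order_value).
days = [(10_000 + 100 * (i % 7), 0.030 + 0.001 * (i % 3), 80 + 2 * (i % 5))
        for i in range(60)]
odd_day = (10_400, 0.010, 84)  # ordinary traffic and order value, collapsed conversion
days.append(odd_day)

print(mean_path_length(odd_day, days), mean_path_length(days[0], days))
```

The odd day should come out with a clearly shorter average path than an ordinary day, which is the signal the algorithm scores on. In practice a maintained library implementation is a better starting point than hand-rolled code.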

Prophet / Time Series Models

Facebook's Prophet and similar time series models build baselines that account for multiple seasonality patterns (daily, weekly, annual), holidays, and trends simultaneously. The model predicts what today's value should be, and you flag significant deviations from the prediction.

Autoencoders

Neural networks that learn to compress and reconstruct normal data. When presented with anomalous data, reconstruction error increases. This approach works well when you have many metrics and the anomaly is in the relationship between metrics rather than in any single metric.

When ML Is Worth the Complexity

Use ML-based anomaly detection when:

- Your data has complex, multi-layered seasonal patterns

- You need to detect anomalies across many metrics simultaneously

- Simple statistical methods produce too many false positives

- The cost of missing a real anomaly is high

Stick with statistical methods when:

- You have a small number of key metrics to monitor

- Your data patterns are relatively simple

- You need stakeholders to understand why something was flagged

- You do not have ML expertise on the team

The False Alarm Problem

The biggest practical challenge in anomaly detection is not missing anomalies; it is generating too many false alarms. When every Monday triggers an alert because the baseline is not adjusted for day of week, people stop paying attention, and then they miss the real anomalies.

Strategies to Reduce False Alarms

- Use seasonal baselines: Compare to the same day of week or the same week of year, not to a global average.

- Require persistence: Flag an anomaly only if it persists for two or more consecutive periods, not just a single data point.

- Tiered severity: Use warning (2 sigma) and critical (3 sigma) levels. Notify only the right people at each level.

- Suppress known events: If you know Black Friday will spike revenue, suppress anomaly alerts for that date.

- Review and tune regularly: Check your false alarm rate monthly. If more than 20% of alerts are false positives, your thresholds need adjustment.
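Two of the strategies above, persistence and tiered severity, combine naturally. The Python sketch below is one possible way to express them; the function name, severity labels, and default values are illustrative assumptions, and the input is a list of z-scores ordered oldest to newest.

```python
def classify(z_scores, warn=2.0, critical=3.0, persistence=2):
    """Severity for the latest period.

    An alert fires only if the last `persistence` scores all breach the
    warning level in the same direction. `persistence` should be >= 1.
    """
    recent = z_scores[-persistence:]
    if len(recent) < persistence:
        return "OK"
    same_direction = all(z > 0 for z in recent) or all(z < 0 for z in recent)
    if not same_direction or any(abs(z) < warn for z in recent):
        return "OK"
    return "CRITICAL" if abs(recent[-1]) >= critical else "WARNING"

print(classify([0.1, -2.4]))   # a single bad day is not enough
print(classify([-2.2, -2.6]))  # two consecutive breaches
print(classify([-2.5, -3.4]))  # persistent and beyond 3 sigma
```

Requiring persistence delays the alert by one period, so it suits metrics where a one-day lag is acceptable.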

Building an Anomaly Detection System

Start Simple

Begin with statistical baselines for your 5-10 most important metrics. Use day-of-week adjusted baselines with a 2.5 sigma threshold. This will catch most significant anomalies with manageable noise.

Add Context Automatically

When an anomaly fires, automatically include context: related metrics, recent changes, and comparison to the same day last week and last year. This helps the recipient quickly determine if the anomaly is real.

Route Alerts Appropriately

Revenue anomalies go to finance and sales leadership. Traffic anomalies go to marketing. Error rate anomalies go to engineering. Do not send all alerts to everyone.
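Routing can start as a simple lookup table. In this Python sketch the category keys, team names, and fallback recipient are hypothetical placeholders that mirror the examples above.

```python
# Hypothetical mapping from metric category to the teams that should be notified.
ROUTES = {
    "revenue": ["finance", "sales-leadership"],
    "traffic": ["marketing"],
    "error_rate": ["engineering"],
}

def recipients(metric_category, default=("analytics",)):
    """Return the teams to notify, falling back to a default owner."""
    return ROUTES.get(metric_category, list(default))

print(recipients("revenue"))
print(recipients("inventory"))  # unmapped categories go to the default owner
```

An explicit fallback ensures that an alert for an unmapped metric still reaches someone.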

Close the Loop

Track what happens after an alert. Was it a real issue? Was action taken? This feedback loop improves your system over time.
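One lightweight way to record that feedback is to log an outcome per alert and compute the false alarm rate from the log. The Python sketch below assumes a hypothetical `AlertOutcome` record; the field names are illustrative.

```python
from dataclasses import dataclass

@dataclass
class AlertOutcome:
    metric: str
    real_issue: bool    # did the alert point at a genuine problem?
    action_taken: bool  # did anyone act on it?

def false_alarm_rate(outcomes):
    """Share of alerts that turned out not to be real issues."""
    if not outcomes:
        return 0.0
    return sum(not o.real_issue for o in outcomes) / len(outcomes)

# Hypothetical review log for one month
log = [
    AlertOutcome("revenue", True, True),
    AlertOutcome("traffic", False, False),
    AlertOutcome("revenue", True, False),
    AlertOutcome("error_rate", True, True),
]
print(f"False alarm rate: {false_alarm_rate(log):.0%}")
```

Comparing this figure against the 20% guideline mentioned earlier gives the monthly review a concrete number to work from.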

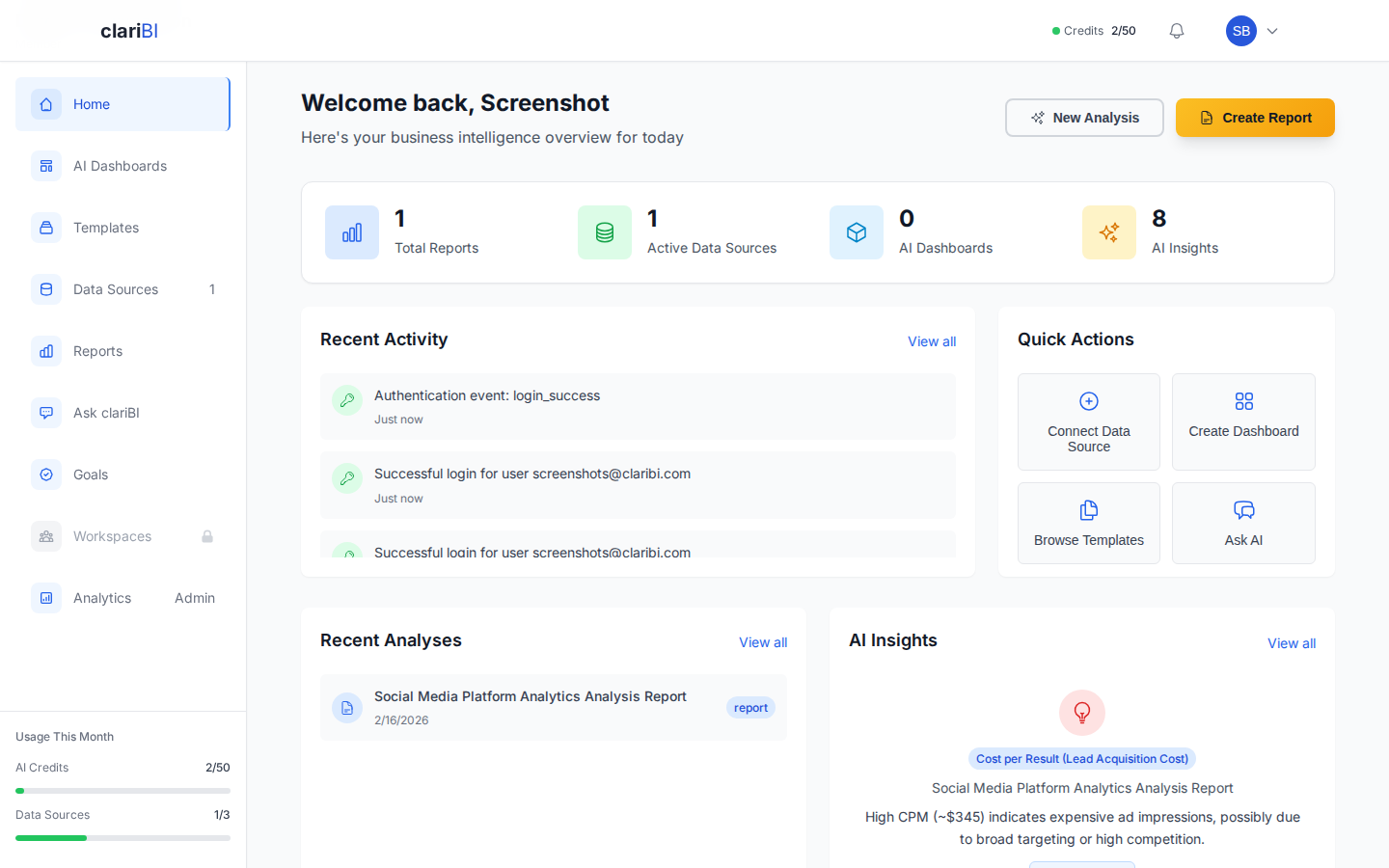

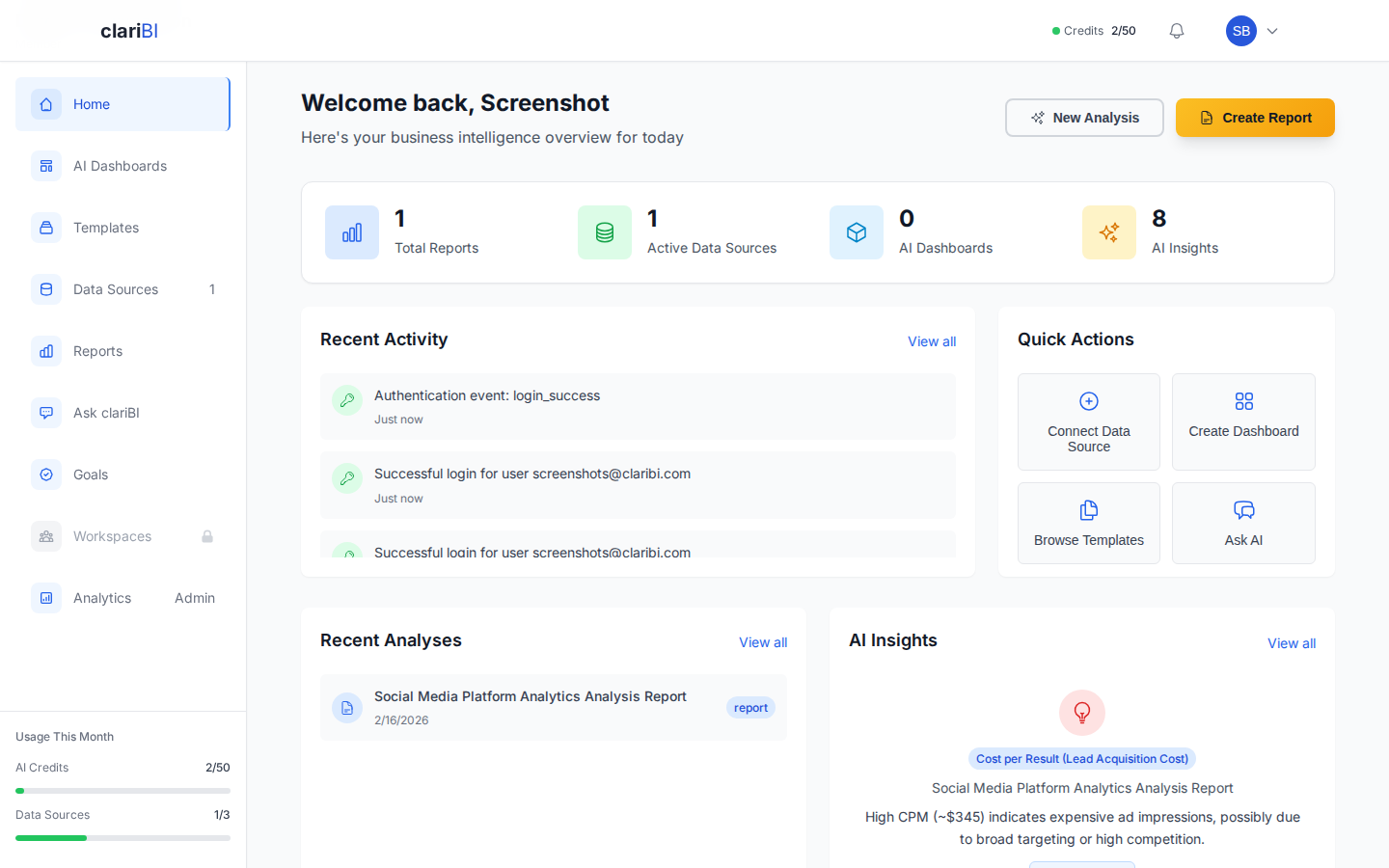

How clariBI Helps Monitor Metrics

clariBI provides several tools to help you stay on top of your key metrics:

- Dashboards with Configurable Refresh: Build dashboards that track your most important metrics and set refresh intervals to keep data current.

- AI Analysis on Demand: When you notice something unusual, use the conversational analytics interface to investigate: "What changed in the enterprise segment yesterday?" and get an AI-generated analysis of the situation.

- Scheduled Reports: Set up automated reports delivered on a regular cadence so your team reviews key metrics consistently without relying on someone to pull the data manually.

- Period Comparisons: Built-in period-over-period comparisons help you spot deviations from historical patterns and understand whether changes are significant.

Conclusion

Anomaly detection is not about finding every unusual data point. It is about finding the unusual data points that matter, quickly enough to act on them. Start with simple statistical methods, account for seasonality, tune your thresholds to minimize false alarms, and build in context so recipients can evaluate alerts without hunting for additional information. The goal is a system where every alert is worth investigating.