Bad data is expensive. A pricing decision based on incomplete revenue figures, a marketing budget allocated using double-counted conversions, a churn analysis built on customers who had not actually churned: these mistakes happen more often than anyone admits. The good news is that most data quality issues follow predictable patterns, and you can catch them systematically before they reach a dashboard or a decision.

The Cost of Bad Data

Data quality issues rarely announce themselves. Instead, they erode trust slowly. A sales VP notices the dashboard number does not match their CRM. A finance lead finds a discrepancy between two reports. The CEO gets a different revenue figure than the CFO. Each incident chips away at confidence in the data, and eventually people stop trusting any report and revert to spreadsheets and gut feel.

The direct costs are also real: mispriced products, misallocated marketing spend, incorrect commission payments, compliance reporting errors, and planning based on false assumptions. One study by Gartner estimated that poor data quality costs organizations an average of $12.9 million per year.

The Five Most Common Data Quality Issues

1. Missing Values

Null fields, empty strings, and placeholder defaults in fields that should have been populated. Common examples:

- Customer records with no industry or company size (breaks segmentation analysis)

- Orders with no attribution source (makes channel analysis incomplete)

- Invoices with no payment date (distorts revenue timing)

How to catch it: Count nulls by column on a regular schedule. If the null rate for a critical field exceeds a threshold (say 5%), flag it for investigation. A sudden spike in nulls often indicates a broken integration or a form change.
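
As a rough illustration of the null-rate check described above, here is a minimal Python sketch. The record layout and field names (`industry`, `company_size`) are hypothetical, and treating blank strings as missing is an assumption you may need to adjust per field. The 5% default mirrors the example threshold in the text.

```python
from typing import Any, Iterable, Mapping


def is_missing(value: Any) -> bool:
    """Treat None and blank strings as missing (an assumption; adjust per field)."""
    return value is None or (isinstance(value, str) and value.strip() == "")


def null_rates(rows: Iterable[Mapping[str, Any]], columns: list[str]) -> dict[str, float]:
    """Return the share of rows in which each column is missing."""
    rows = list(rows)
    if not rows:
        return {column: 0.0 for column in columns}
    return {
        column: sum(is_missing(row.get(column)) for row in rows) / len(rows)
        for column in columns
    }


def columns_over_threshold(rates: Mapping[str, float], threshold: float = 0.05) -> list[str]:
    """List the columns whose null rate exceeds the threshold."""
    return sorted(column for column, rate in rates.items() if rate > threshold)


# Hypothetical customer records; the field names are illustrative only.
customers = [
    {"id": 1, "industry": "SaaS", "company_size": "50-200"},
    {"id": 2, "industry": "", "company_size": "10-50"},
    {"id": 3, "industry": None, "company_size": "200+"},
    {"id": 4, "industry": "Retail", "company_size": "10-50"},
]
rates = null_rates(customers, ["industry", "company_size"])
flagged = columns_over_threshold(rates)  # columns to investigate
```

Storing `rates` from each scheduled run makes it possible to spot the sudden spikes that point to a broken integration or a form change.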

2. Duplicate Records

The same entity appearing multiple times in the dataset. This inflates counts and can double-count revenue. Common causes:

- The same customer created accounts with different email addresses

- An integration sync created duplicate records during a retry

- Manual data entry added the same contact twice with slightly different names

How to catch it: Run deduplication checks on key identifiers (email, phone, company name). Look for records that share one of these identifiers but have different record IDs. Track the duplicate rate over time, because an increase often points to an integration problem.
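
One possible way to sketch the duplicate check above in Python is to group records by a normalized identifier and report any group with more than one record ID. The `id` and `email` field names are hypothetical. Note the limitation: exact matching after normalization will not catch the same customer using two different email addresses or slightly different names. That would need fuzzy matching, which this sketch does not attempt.

```python
from collections import defaultdict
from typing import Any, Mapping, Sequence


def normalize_email(email: str) -> str:
    """Lowercase and trim so trivially different spellings compare equal."""
    return email.strip().lower()


def find_duplicates(
    records: Sequence[Mapping[str, Any]], key: str = "email", id_field: str = "id"
) -> dict[str, list[Any]]:
    """Map each shared identifier to the record IDs that use it."""
    groups: dict[str, set] = defaultdict(set)
    for record in records:
        value = record.get(key)
        if value:  # skip missing identifiers; those belong to the null check
            groups[normalize_email(value)].add(record[id_field])
    return {value: sorted(ids) for value, ids in groups.items() if len(ids) > 1}


def duplicate_rate(records: Sequence[Mapping[str, Any]]) -> float:
    """Share of records that are redundant copies of another record."""
    if not records:
        return 0.0
    extra = sum(len(ids) - 1 for ids in find_duplicates(records).values())
    return extra / len(records)


# Hypothetical contact records.
contacts = [
    {"id": 1, "email": "Ann@Example.com"},
    {"id": 2, "email": " ann@example.com "},
    {"id": 3, "email": "bob@example.com"},
    {"id": 4, "email": None},
]
duplicates = find_duplicates(contacts)
rate = duplicate_rate(contacts)
```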

3. Stale Data

Data that has not been updated when it should have been. Examples:

- A data pipeline that stopped running three days ago without anyone noticing

- CRM records that have not been updated since initial entry

- Inventory counts that reflect yesterday's state but not today's shipments

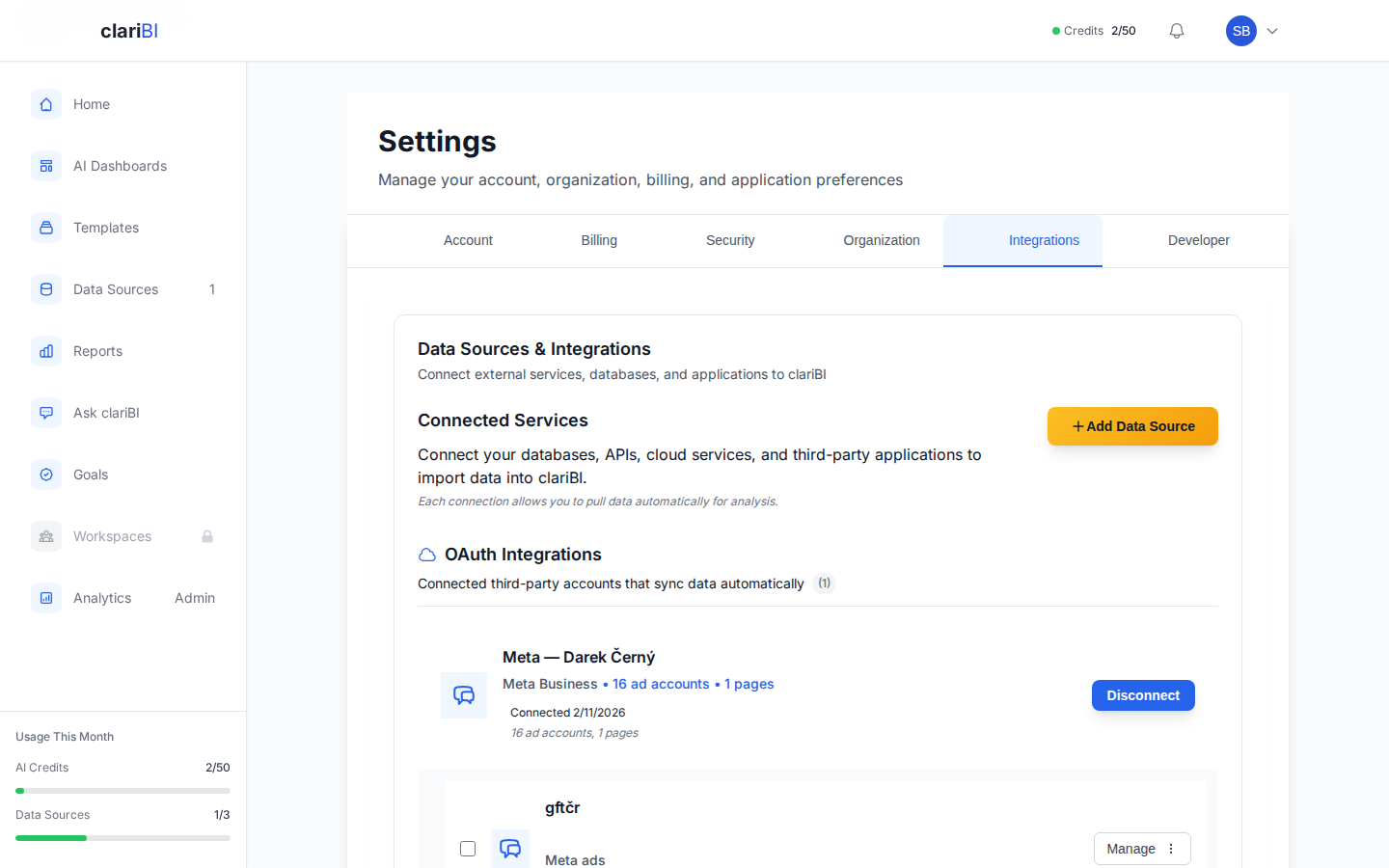

How to catch it: Track the "last updated" timestamp for each data source and each table. Set alerts when data is older than expected. In clariBI, data source health monitoring shows the last successful sync time and alerts when a connection fails or data freshness drops below your threshold.
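
If you want to run a freshness check yourself, a minimal sketch might look like the following. It assumes you can already obtain a timezone-aware "last updated" timestamp per source. The source names and allowed ages are hypothetical, and this is not a description of how clariBI implements its monitoring.

```python
from datetime import datetime, timedelta, timezone
from typing import Mapping, Optional


def stale_sources(
    last_updated: Mapping[str, datetime],
    max_age: Mapping[str, timedelta],
    now: Optional[datetime] = None,
) -> dict[str, timedelta]:
    """Return the age of every source that is older than its allowed maximum."""
    now = now or datetime.now(timezone.utc)
    stale = {}
    for name, updated_at in last_updated.items():
        age = now - updated_at
        if age > max_age[name]:
            stale[name] = age
    return stale


# Hypothetical sources, with a fixed "now" so the example is reproducible.
now = datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc)
last_updated = {
    "crm_contacts": datetime(2024, 1, 10, 11, 30, tzinfo=timezone.utc),
    "orders_pipeline": datetime(2024, 1, 7, 12, 0, tzinfo=timezone.utc),
}
max_age = {
    "crm_contacts": timedelta(hours=1),
    "orders_pipeline": timedelta(hours=24),
}
stale = stale_sources(last_updated, max_age, now=now)
```

Here the pipeline that stopped three days ago is reported, while the CRM table updated 30 minutes ago is not.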

4. Incorrect Values

Values that are present but wrong. These are the hardest to catch because they look like valid data:

- Revenue stored in the wrong currency without conversion

- Dates in the wrong timezone

- A status field that says "active" but the customer canceled last month (system lag)

- Test data mixed in with production data

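How to catch it: Define expected ranges for numerical fields and flag outliers. Revenue per customer should be between $X and $Y. Order quantities should be positive. Dates should not be in the future. These boundary checks catch a surprising number of errors, although values that are wrong but still plausible, such as an outdated status, need cross-source or business logic checks instead.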
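
The boundary checks above could be sketched in Python roughly as follows. The order fields (`revenue`, `quantity`, `order_date`) are hypothetical, and the revenue bounds are placeholders you would replace with ranges that make sense for your business.

```python
from datetime import date
from typing import Any, Mapping


def check_order(
    order: Mapping[str, Any], today: date, min_revenue: float, max_revenue: float
) -> list[str]:
    """Return a description of every boundary rule the order violates."""
    problems = []
    if not (min_revenue <= order["revenue"] <= max_revenue):
        problems.append("revenue out of range")
    if order["quantity"] <= 0:
        problems.append("non-positive quantity")
    if order["order_date"] > today:
        problems.append("date in the future")
    return problems


# Hypothetical orders and bounds, checked against a fixed "today".
today = date(2024, 1, 10)
suspicious = {"revenue": 250_000.0, "quantity": 0, "order_date": date(2024, 6, 1)}
normal = {"revenue": 120.0, "quantity": 2, "order_date": date(2024, 1, 9)}

suspicious_problems = check_order(suspicious, today, min_revenue=0.0, max_revenue=10_000.0)
normal_problems = check_order(normal, today, min_revenue=0.0, max_revenue=10_000.0)
```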

5. Schema Changes

The source system changes its data format without warning. A field gets renamed, a column type changes, a new enum value appears, or a deprecated field starts returning nulls. These changes break downstream reports and dashboards.

How to catch it: Monitor the schema of each source table. Alert when columns are added, removed, or change type. Track enum values and alert on new ones that do not match your mapping.
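
A minimal sketch of schema monitoring, assuming you can read each table's schema as a mapping of column name to type name and that you keep a snapshot of the expected schema. The column names, type names, and enum mapping below are hypothetical.

```python
from typing import Iterable, Mapping


def diff_schema(expected: Mapping[str, str], observed: Mapping[str, str]) -> dict:
    """Compare two {column: type} mappings and report what changed."""
    shared = expected.keys() & observed.keys()
    return {
        "added": sorted(observed.keys() - expected.keys()),
        "removed": sorted(expected.keys() - observed.keys()),
        "type_changed": {
            column: (expected[column], observed[column])
            for column in sorted(shared)
            if expected[column] != observed[column]
        },
    }


def unmapped_enum_values(values: Iterable[str], mapping: Mapping[str, str]) -> list[str]:
    """Return enum values seen in the data that the mapping does not cover."""
    return sorted(set(values) - set(mapping))


# Hypothetical snapshot of the expected schema versus what the source returns now.
expected = {"id": "integer", "status": "text", "amount": "numeric"}
observed = {"id": "integer", "status": "text", "amount": "text", "region": "text"}
changes = diff_schema(expected, observed)

# Hypothetical mapping from source status values to reporting categories.
status_mapping = {"active": "Active", "canceled": "Churned"}
new_values = unmapped_enum_values(["active", "paused", "canceled"], status_mapping)
```

A renamed field shows up in this diff as one removed column plus one added column, so an alert on either list covers renames as well.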

Building a Data Quality Monitoring System

Layer 1: Source-Level Checks

At the point where data enters your analytics system, run these checks:

- Freshness: Is the data current? When was the last record added or updated?

- Volume: Is the number of records within the expected range? A sudden 50% drop in daily records is more likely a broken pipeline than a business change.

- Completeness: Are all expected fields populated? Track null rates for critical columns.
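
Freshness and completeness reduce to timestamp comparisons and null counts. The volume check can be sketched as a comparison of today's record count against a recent baseline. This version uses a simple average of prior daily counts, which is an assumption. A seasonal business would likely want a same-weekday baseline instead. The 50% default mirrors the example in the list above.

```python
from statistics import mean
from typing import Sequence


def volume_change(history: Sequence[int], today: int) -> float:
    """Relative change of today's count versus the average of recent days."""
    baseline = mean(history)
    if baseline == 0:
        return 0.0  # no meaningful baseline to compare against
    return (today - baseline) / baseline


def is_volume_drop(history: Sequence[int], today: int, max_drop: float = 0.5) -> bool:
    """True when today's count fell by at least max_drop relative to the baseline."""
    return volume_change(history, today) <= -max_drop


# Hypothetical daily record counts for the previous three days.
recent_counts = [1000, 1100, 900]
drop_alert = is_volume_drop(recent_counts, today=450)
normal_day = is_volume_drop(recent_counts, today=800)
```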

Layer 2: Metric-Level Checks

After transformation, verify that calculated metrics are reasonable:

- Range checks: Is this month's revenue within 50% of last month's? If it is 3x higher, something may be double-counted.

- Consistency checks: Does the sum of parts equal the total? Do MRR components add up to total MRR?

- Cross-source checks: Does customer count in the CRM match customer count in the billing system? Significant discrepancies indicate a sync problem.
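
The three metric-level checks above can be sketched as small Python functions. The tolerances and the MRR component names are hypothetical, and what counts as a "significant" cross-source discrepancy is left for you to decide.

```python
import math
from typing import Mapping


def within_relative_change(current: float, previous: float, tolerance: float = 0.5) -> bool:
    """Range check: is the current value within +/- tolerance of the previous one?"""
    if previous == 0:
        return current == 0
    return abs(current - previous) / abs(previous) <= tolerance


def components_match_total(
    components: Mapping[str, float], total: float, abs_tol: float = 0.01
) -> bool:
    """Consistency check: do the parts add up to the reported total?"""
    return math.isclose(sum(components.values()), total, abs_tol=abs_tol)


def count_discrepancy(count_a: int, count_b: int) -> float:
    """Cross-source check: relative gap between two counts of the same entity."""
    largest = max(count_a, count_b)
    return abs(count_a - count_b) / largest if largest else 0.0


# Hypothetical figures.
tripled_revenue_ok = within_relative_change(current=300_000.0, previous=100_000.0)
mrr_ok = components_match_total(
    {"new": 12_000.0, "expansion": 3_000.0, "churned": -2_000.0}, total=13_000.0
)
crm_vs_billing = count_discrepancy(980, 1000)
```

Revenue that tripled fails the range check, which matches the double-counting scenario described above.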

Layer 3: Business Logic Checks

Some quality issues can only be caught by understanding the business context:

- A customer marked as "churned" who has an active subscription is a data conflict

- An invoice dated after the contract end date is suspicious

- A negative revenue value without a corresponding refund record needs investigation
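
These rules can be encoded once the relevant fields sit side by side. The following is a sketch under assumed field names (`status`, `has_active_subscription`, `contract_end`, and invoice `id`, `date`, `amount`), all of which are hypothetical and will differ in your systems.

```python
from datetime import date
from typing import Any, Mapping, Sequence, Set


def find_conflicts(
    customer: Mapping[str, Any],
    invoices: Sequence[Mapping[str, Any]],
    refunded_invoice_ids: Set[str],
) -> list[str]:
    """Return a description of each business-rule conflict found for one customer."""
    issues = []
    if customer["status"] == "churned" and customer["has_active_subscription"]:
        issues.append("churned customer has an active subscription")
    for invoice in invoices:
        if invoice["date"] > customer["contract_end"]:
            issues.append(f"invoice {invoice['id']} is dated after the contract end")
        if invoice["amount"] < 0 and invoice["id"] not in refunded_invoice_ids:
            issues.append(f"invoice {invoice['id']} is negative with no refund record")
    return issues


# Hypothetical customer and invoices.
customer = {
    "status": "churned",
    "has_active_subscription": True,
    "contract_end": date(2023, 12, 31),
}
invoices = [
    {"id": "INV-1", "date": date(2023, 11, 1), "amount": 500.0},
    {"id": "INV-2", "date": date(2024, 1, 15), "amount": 500.0},
    {"id": "INV-3", "date": date(2023, 12, 1), "amount": -200.0},
    {"id": "INV-4", "date": date(2023, 12, 5), "amount": -50.0},
]
conflicts = find_conflicts(customer, invoices, refunded_invoice_ids={"INV-4"})
```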

Practical Implementation

Start With the Metrics That Matter Most

You do not need to monitor every field in every table. Start with the data that feeds your most important dashboards and decisions. If your leadership team reviews revenue, customer count, and churn weekly, make sure those three metrics have quality checks first.

Automate the Checks

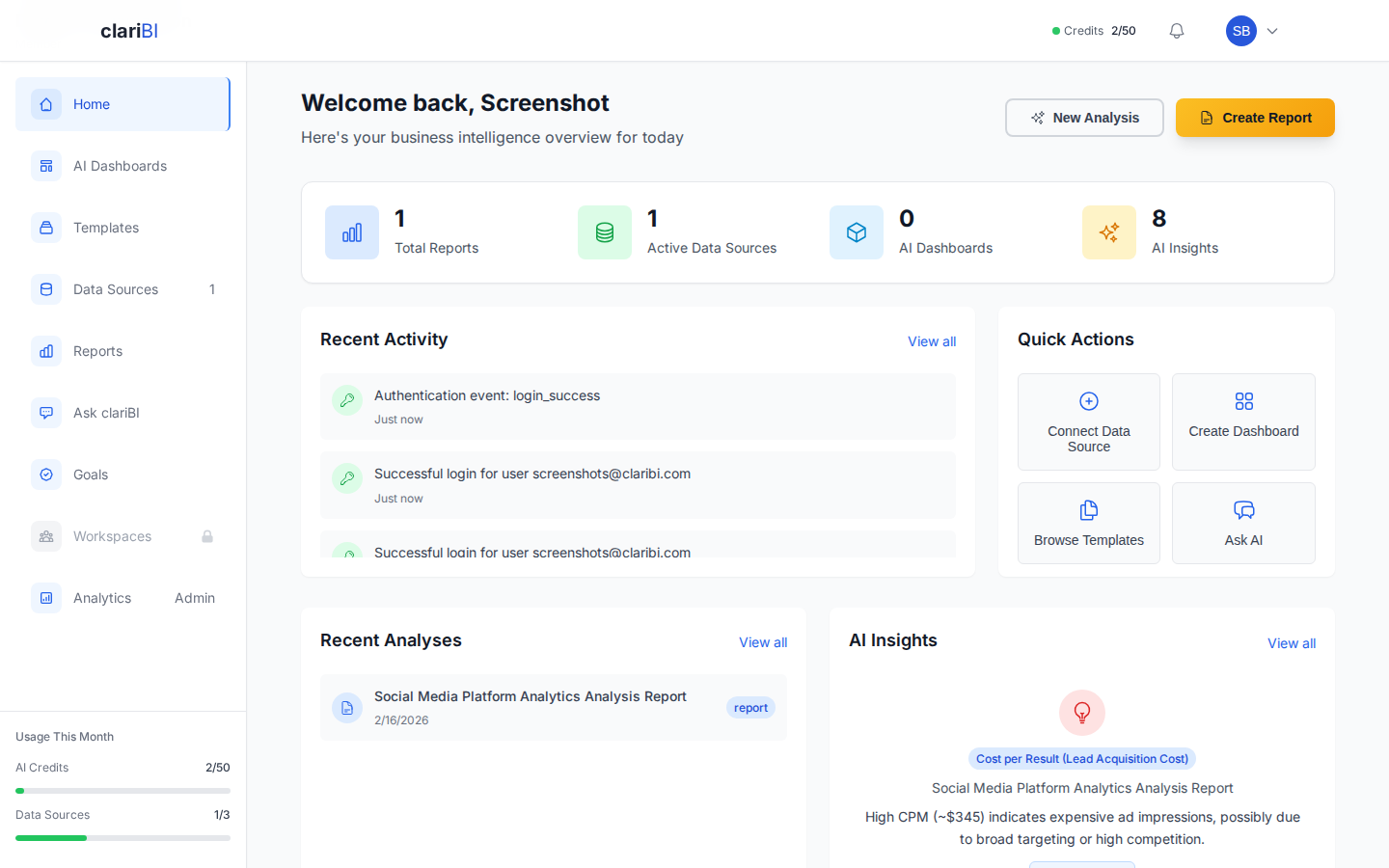

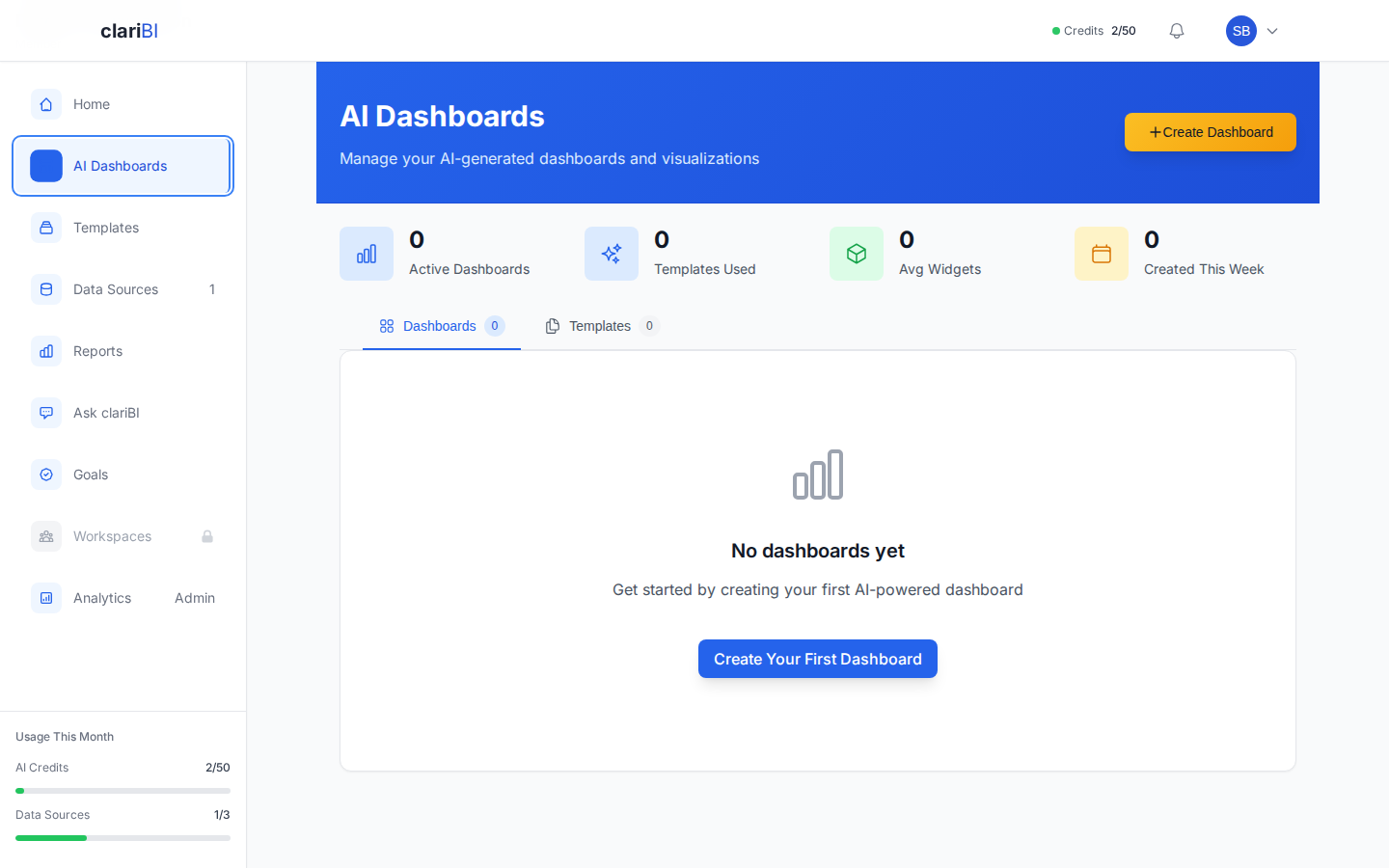

Manual data quality reviews do not scale and are hard to sustain. Set up automated checks that run daily and alert you when something fails. In clariBI, data source monitoring provides automated checks on connection health and data freshness. For more advanced checks, you can build quality metric dashboards that track null rates, volume anomalies, and cross-source discrepancies over time. Review the data quality monitoring guide for configuration steps.

Create an Escalation Process

When a check fails, who investigates? How quickly? Define clear ownership:

- Data freshness alert: Data engineering investigates within 4 hours

- Volume anomaly: Analytics team verifies the cause within 1 business day

- Cross-source discrepancy: Data owner for each source investigates the root cause
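
One way to keep ownership explicit is to store the escalation policy as data next to the checks. This sketch encodes the three example rules above. The check type keys and the `route` helper are hypothetical, and the response window for cross-source discrepancies is left as `None` because the example above does not specify one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Escalation:
    owner: str
    respond_within: Optional[str]  # None where no deadline has been defined


# Policy taken from the example rules above; the keys are illustrative names.
ESCALATION_POLICY = {
    "freshness": Escalation("Data engineering", "4 hours"),
    "volume_anomaly": Escalation("Analytics team", "1 business day"),
    "cross_source_discrepancy": Escalation("Data owner for each source", None),
}


def route(check_type: str) -> Escalation:
    """Look up who investigates a failed check; fail loudly if nobody owns it."""
    try:
        return ESCALATION_POLICY[check_type]
    except KeyError:
        raise ValueError(f"no owner defined for check type {check_type!r}") from None


freshness_owner = route("freshness")
```

Raising an error for an unknown check type is a deliberate choice: a check without an owner is itself a gap worth surfacing.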

Track Quality Over Time

Build a data quality scorecard that shows the health of each data source and key metric. Track improvements and regressions month over month. This makes data quality visible to leadership and creates accountability for maintaining it.
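
A scorecard can start as nothing more than the pass rate of checks per data source. The sketch below assumes check results arrive as `(source, check_name, passed)` tuples. That shape, the source names, and the equal weighting of all checks are assumptions, not a prescribed scoring method.

```python
from collections import defaultdict
from typing import Iterable, Tuple


def scorecard(results: Iterable[Tuple[str, str, bool]]) -> dict[str, float]:
    """Return the share of passed checks for each data source."""
    totals = defaultdict(lambda: [0, 0])  # source -> [passed, total]
    for source, _check_name, passed in results:
        totals[source][1] += 1
        if passed:
            totals[source][0] += 1
    return {source: passed / total for source, (passed, total) in totals.items()}


# Hypothetical results from one day's run.
results = [
    ("crm", "freshness", True),
    ("crm", "null_rate_industry", False),
    ("billing", "freshness", True),
    ("billing", "volume", True),
]
scores = scorecard(results)
```

Saving one such snapshot per month gives you the month-over-month trend without any extra tooling.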

Prevention Practices

Catching problems is good. Preventing them is better.

- Input validation: Enforce data types, required fields, and valid ranges at the point of entry. A form that accepts a phone number in the email field creates a problem that is much harder to fix downstream.

- Integration testing: When connecting a new data source or updating an existing integration, test with production-like data before going live. Verify that field mappings, data types, and update frequencies match expectations.

- Change communication: When a source system changes (new fields, removed fields, new enum values), the change should be communicated to the analytics team before deployment, not discovered when a dashboard breaks.

- Documentation: Document the expected schema, update frequency, and known quirks of each data source. When a new analyst joins the team, they should not have to rediscover these through painful trial and error.

Data quality is not a project with a finish date. It is a practice: a set of automated checks, clear ownership, and regular attention that prevents the slow degradation of trust in your data. Invest in quality monitoring in proportion to how much you rely on data for decisions. If data drives your pricing, hiring, and strategy, treat data quality with the same seriousness as application uptime. The cost of getting it right is a fraction of the cost of a bad decision made on bad data.